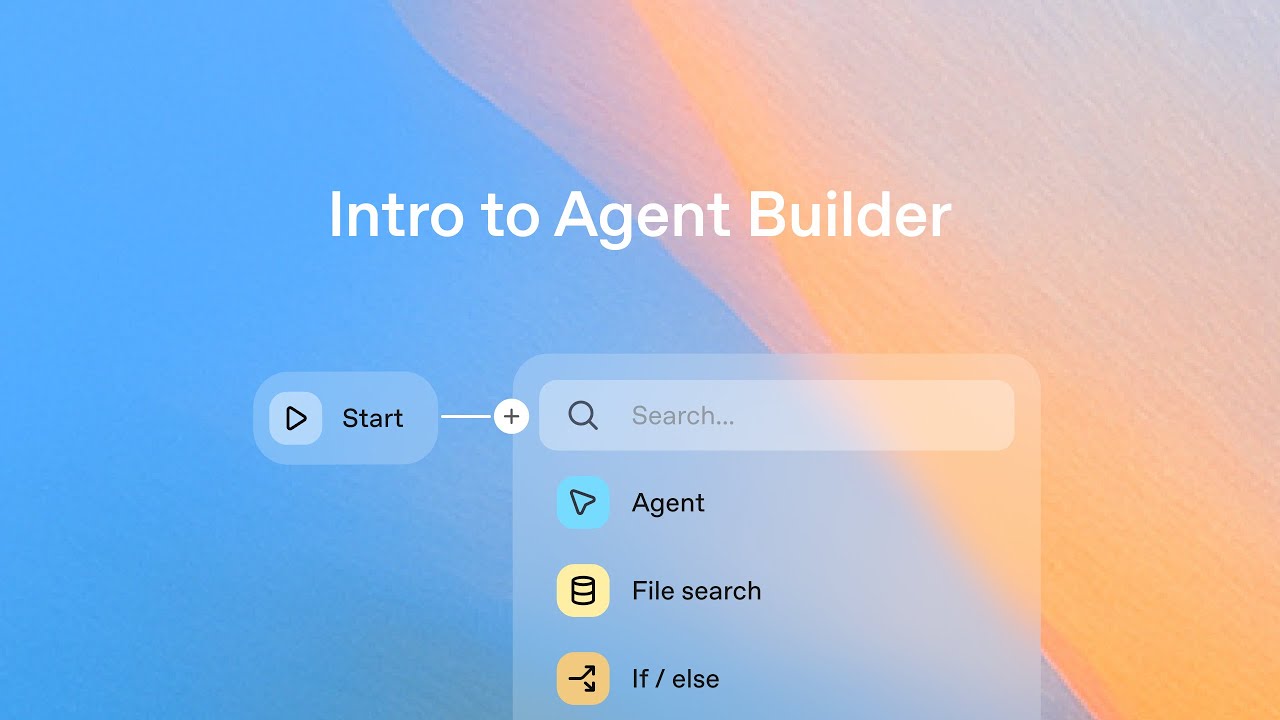

Build beautiful frontends with OpenAI Codex

Video description

Codex’s multimodal abilities can help you design, iterate, and build beautiful frontends for your apps.

Channing Conger and Romain Huet show you how to use Codex cloud as your frontend design partner to build the perfect UI for your app.

Timestamps:

00:00 Intro

00:50 Whiteboarding design ideas

01:43 Creating Codex tasks from your phone

02:16 Sketching a new feature

03:00 Different ways to use multimodal features

04:06 Channing’s favorite examples

05:36 Checking in on our tasks

07:30 What Channing and team are building next

Try it yourself:

Codex cloud: chatgpt.com/codex

Learn more:

Codex: openai.com/codex

Developer docs: https://developers.openai.com/codex/cloud