Developer State Of The Union

Video description

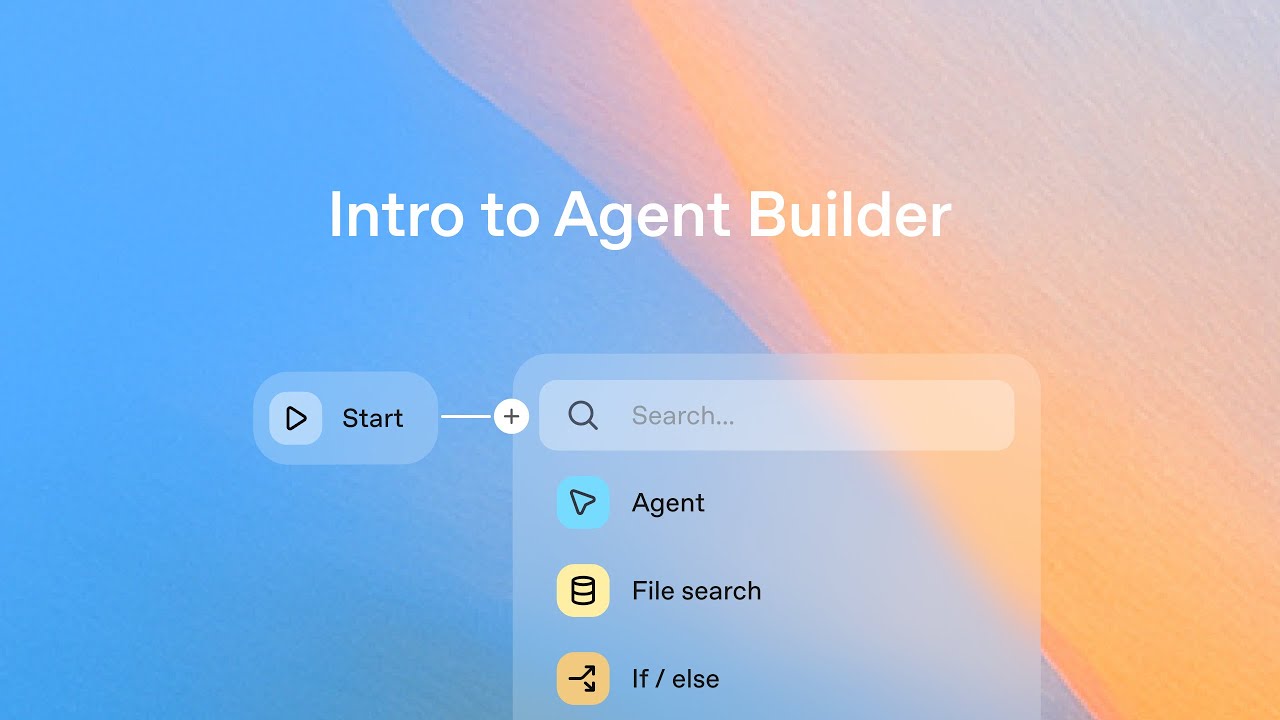

The developer experience is being rewritten with AI. The Developer State of the Union will explore how Codex, gpt-oss, and our API open up powerful ways to build, experiment, and scale. We’ll share the latest updates, demo new capabilities, and look ahead at what’s next for developers.