The Subagent Era Is Officially Here - Learn this Now


Video description

OpenAI just released GPT-5.4 mini and nano - and they didn't just bill them as cheap models. They explicitly framed them as models built for the subagent era. That's a signal worth paying attention to!

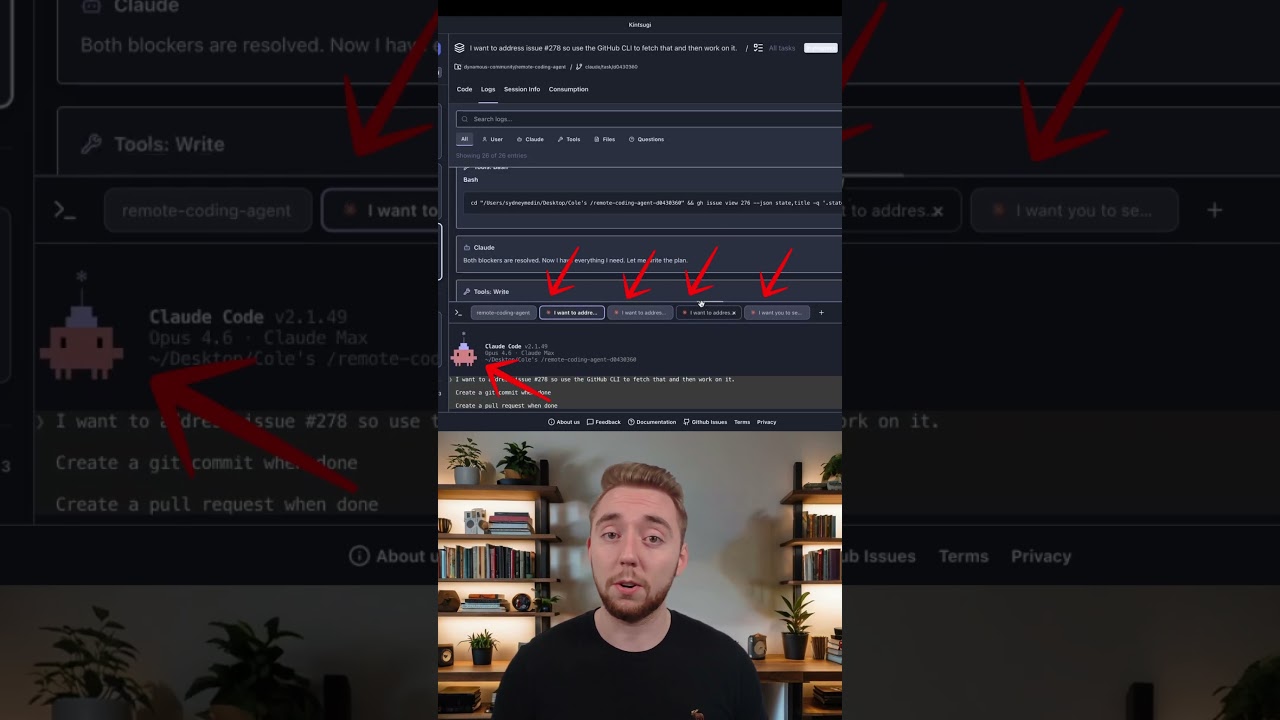

Because it's not just OpenAI. Every major coding agent - Claude Code, Codex, Cursor, Gemini CLI, VS Code Copilot, Mistral Vibe, Goose - is doubling down on subagents as a core part of how serious AI workflows get structured. The entire industry is converging on an architecture.

This video breaks down what subagents actually are, why they solve the context window problem more fundamentally than you might think, and what this industry shift means for how you build agentic workflows.
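The context-window argument can be illustrated with a toy model. This is a minimal sketch, not how Claude Code, Codex, or any other tool actually implements subagents. `AgentContext`, `run_subagent`, and `main_agent` are hypothetical names. "Tokens" are approximated by word counts, and the "summary" is a placeholder for what a real model would write. The sketch shows a parent agent handing bulky research to a subagent that runs in its own fresh context. Only a short summary lands in the parent's context.

```python
# Hypothetical toy model of subagent context isolation (no real LLM or agent API).
from dataclasses import dataclass, field


@dataclass
class AgentContext:
    """A toy context window: a list of messages with a token budget."""
    budget: int
    messages: list[str] = field(default_factory=list)

    def tokens(self) -> int:
        # Crude stand-in for a tokenizer: count whitespace-separated words.
        return sum(len(m.split()) for m in self.messages)

    def add(self, message: str) -> None:
        self.messages.append(message)
        if self.tokens() > self.budget:
            raise OverflowError("context window exceeded")


def run_subagent(task: str, documents: list[str], budget: int) -> str:
    """Hypothetical subagent: reads everything in its OWN context and
    returns only a short summary to the caller."""
    ctx = AgentContext(budget=budget)
    ctx.add(task)
    for doc in documents:
        ctx.add(doc)  # the bulky reading happens here, not in the parent
    # Placeholder for an LLM-written summary: first word of each document.
    keywords = [doc.split()[0] for doc in documents if doc.split()]
    return f"summary of {len(documents)} docs: " + ", ".join(keywords)


def main_agent(files: list[str], budget: int = 200) -> AgentContext:
    """Parent agent delegates research instead of reading files itself."""
    parent = AgentContext(budget=budget)
    parent.add("Task: explain how authentication works in this repo")
    summary = run_subagent("research auth", files, budget=budget * 10)
    parent.add(summary)  # only the condensed result enters the parent context
    return parent


if __name__ == "__main__":
    files = [f"file{i} " + "code " * 50 for i in range(20)]  # ~1,020 "tokens" total
    parent = main_agent(files)
    print(parent.tokens())  # stays far below the parent's 200-token budget
```

In this toy setup, 20 files of about 51 words each would overflow a 200-token parent context if read directly. Delegated to a subagent, they leave only the task line and a one-line summary in the parent's context.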

~~~~~~~~~~~~~~~~~~~~~~~~~~

- Try Oracle Database 26AI and build AI apps right where your data lives:

https://fandf.co/4sDnV9h

- Here is my Agentic RAG agent notebook:

https://github.com/oracle-devrel/oracle-ai-developer-hub/blob/main/notebooks/oracle_agentic_rag_hybrid_search.ipynb

~~~~~~~~~~~~~~~~~~~~~~~~~~

- Want a complete AI transformation? Come to the free AI Transformation Workshop I'm hosting with Lior Weinstein on April 2nd:

https://dynamous.ai/ai-transformation-workshop

- If you want to dive even deeper into building reliable and repeatable systems for AI coding, check out the Dynamous Community and Agentic Coding Course:

https://dynamous.ai/agentic-coding-course

~~~~~~~~~~~~~~~~~~~~~~~~~~

- GPT-5.4 mini and nano announcement:

https://openai.com/index/introducing-gpt-5-4-mini-and-nano/

~~~~~~~~~~~~~~~~~~~~~~~~~~

0:00 GPT-5.4 Mini and Nano

0:48 Entering the Sub-Agent Era

2:02 Performance and Benchmarks

3:11 Solving Context Rot

5:37 Industry Adoption of Sub-Agents

6:24 Warning: Research vs Implementation

7:57 Sponsor: Oracle AI Database

9:34 Demo: Researching Codebases

12:13 Using Sub-Agents in Codex

14:31 Summary and Final Tips

~~~~~~~~~~~~~~~~~~~~~~~~~~

Join me as I push the limits of what is possible with AI. I'll be uploading videos weekly - at least every Wednesday at 7:00 PM CDT!