Build Hour: Built-In Tools


Video description

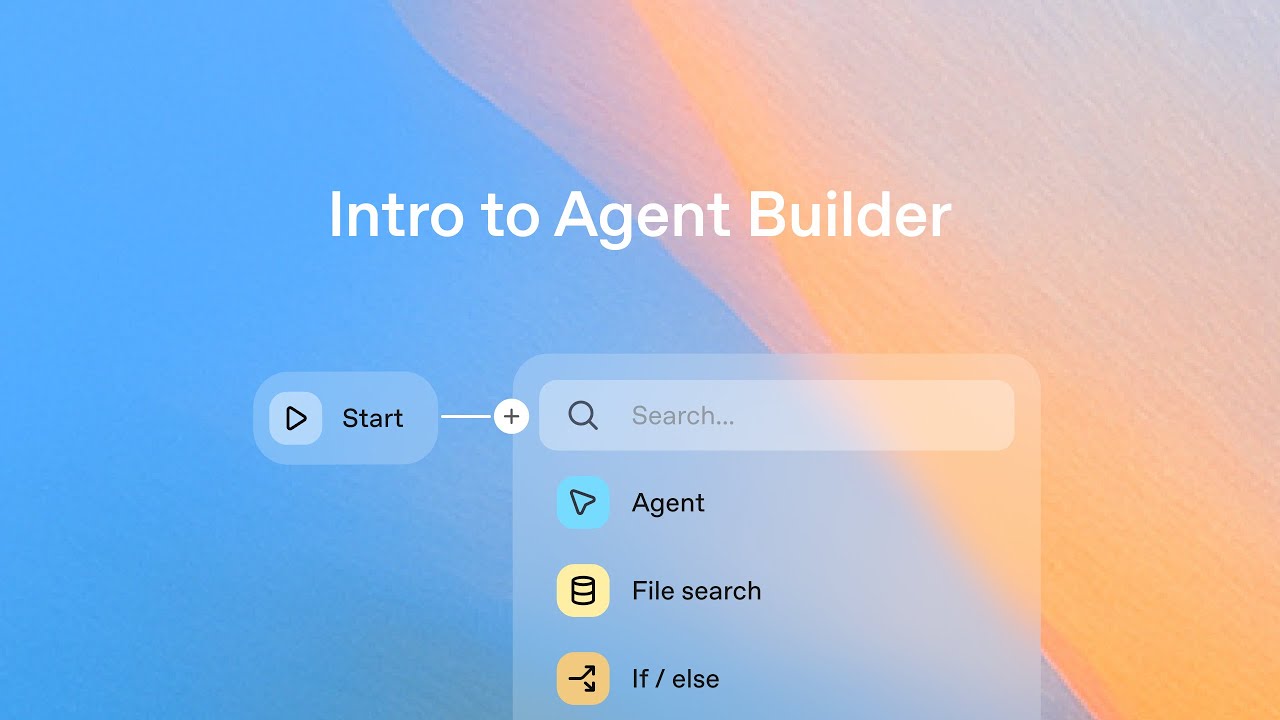

Built-in tools let you extend models out of the box without writing custom functions.

This Build Hour shows you how to use web search, file search, code interpreter, MCP, and image generation directly with the Responses API, with demos of adding these tools to real applications.
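
As a rough illustration of what using these tools "directly with the Responses API" can look like, the sketch below assembles a request body that enables two built-in tools at once. The model name, the prompt, and the exact tool type strings (`web_search_preview`, `code_interpreter`) are assumptions based on the public tools guide rather than on the session itself, so check them against the current documentation before relying on them.

```python
import json


def build_request(prompt: str) -> dict:
    """Assemble a Responses API request body that enables two built-in tools."""
    return {
        "model": "gpt-4.1",  # assumed model name; substitute one you have access to
        "input": prompt,
        "tools": [
            # Assumed tool type strings; verify against the Built-In Tools Guide.
            {"type": "web_search_preview"},
            {"type": "code_interpreter", "container": {"type": "auto"}},
        ],
    }


request = build_request("Find recent population figures for Paris and chart them.")
print(json.dumps(request, indent=2))

# Sending the request needs the `openai` package and an OPENAI_API_KEY:
# from openai import OpenAI
# response = OpenAI().responses.create(**request)
# print(response.output_text)
```

The network call is left commented out so the snippet runs without credentials; the point is that enabling a built-in tool is a matter of listing it in `tools`, with no handler code on your side.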

Katia Gil Guzman (Developer Experience) covers:

- What are built-in tools? How do they compare to function calling?

- Available tools: web search, file search, MCP, code interpreter, computer use, image generation

- Playground demo: experimenting with tools in the Playground (https://platform.openai.com/chat)

- Live demo: building a data exploration dashboard using MCP, web search, and code interpreter

- Why use built-in tools? Minimal coding, out-of-the-box functionality, and the ability to combine tools

- Customer spotlight: Hebbia’s use of web search for finance and legal workflows (https://www.hebbia.com/)

- Live Q&A
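
To make the first agenda item concrete, here is a minimal sketch of how a custom function tool might differ from a built-in one. The `get_stock_price` function, its schema, and its price table are hypothetical and exist only for illustration; the `web_search_preview` type string is an assumption taken from the public tools guide.

```python
# A custom function tool: you describe the schema and run the function yourself.
function_tool = {
    "type": "function",
    "name": "get_stock_price",  # hypothetical function name
    "description": "Look up the latest price for a ticker symbol.",
    "parameters": {
        "type": "object",
        "properties": {"ticker": {"type": "string"}},
        "required": ["ticker"],
        "additionalProperties": False,
    },
}


def get_stock_price(ticker: str) -> float:
    """Hypothetical handler the application must implement and call itself."""
    prices = {"ACME": 12.5}  # made-up data for the example
    return prices[ticker]


# A built-in tool: one entry in the tools list, executed on the platform side.
built_in_tool = {"type": "web_search_preview"}  # assumed type string


def needs_local_handler(tool: dict) -> bool:
    """Only function tools require the application to execute code locally."""
    return tool["type"] == "function"


for tool in (function_tool, built_in_tool):
    print(tool["type"], "needs local handler:", needs_local_handler(tool))
```

With function calling, the model only returns the arguments and your code does the work; with a built-in tool, the call and its result are handled for you, which is where the "minimal coding" benefit comes from.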

👉 Follow along with the code repo: https://github.com/openai/build-hours

👉 Playground: https://platform.openai.com/chat

👉 Built-In Tools Guide: https://platform.openai.com/docs/guides/tools

👉 Sign up for upcoming live Build Hours: https://webinar.openai.com/buildhours