The New Stack and Ops for AI

Video description

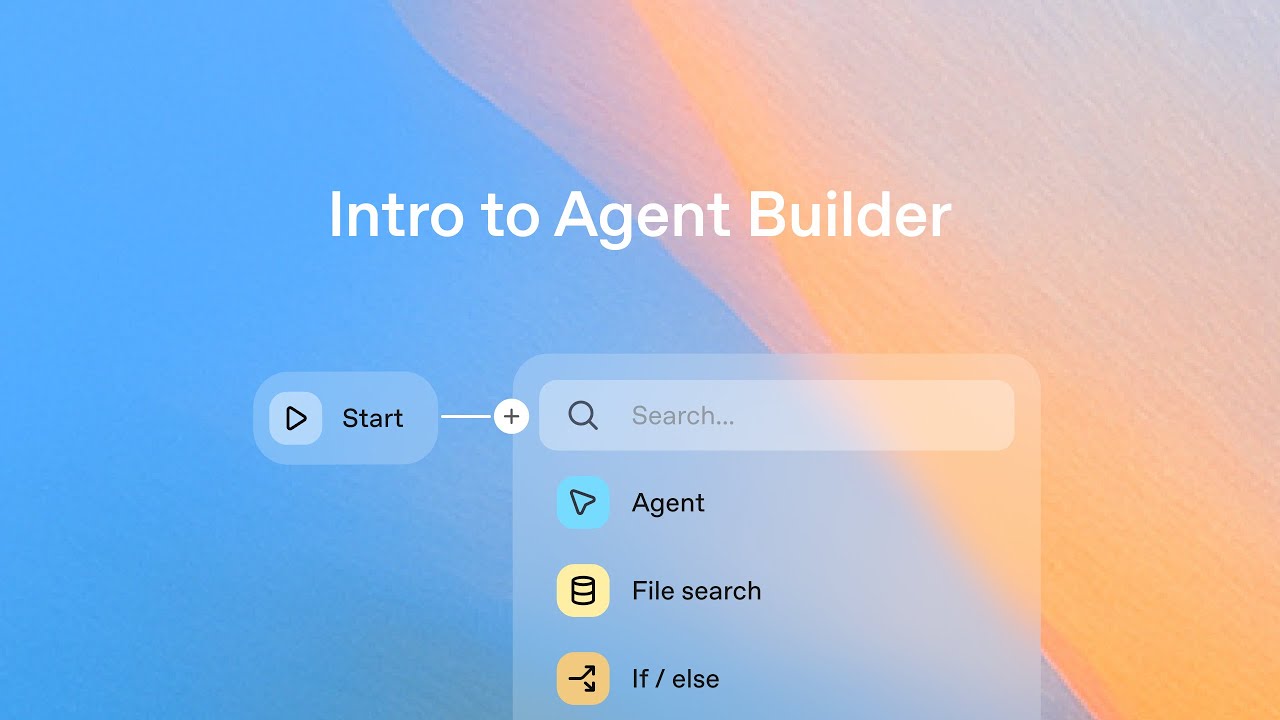

A new framework to navigate the unique considerations for scaling non-deterministic apps from prototype to production.

Speakers:

Shyamal Hitesh Anadkat

Applied AI Engineer at @OpenAI

Sherwin Wu

Head of Engineering, Developer Platform at @OpenAI