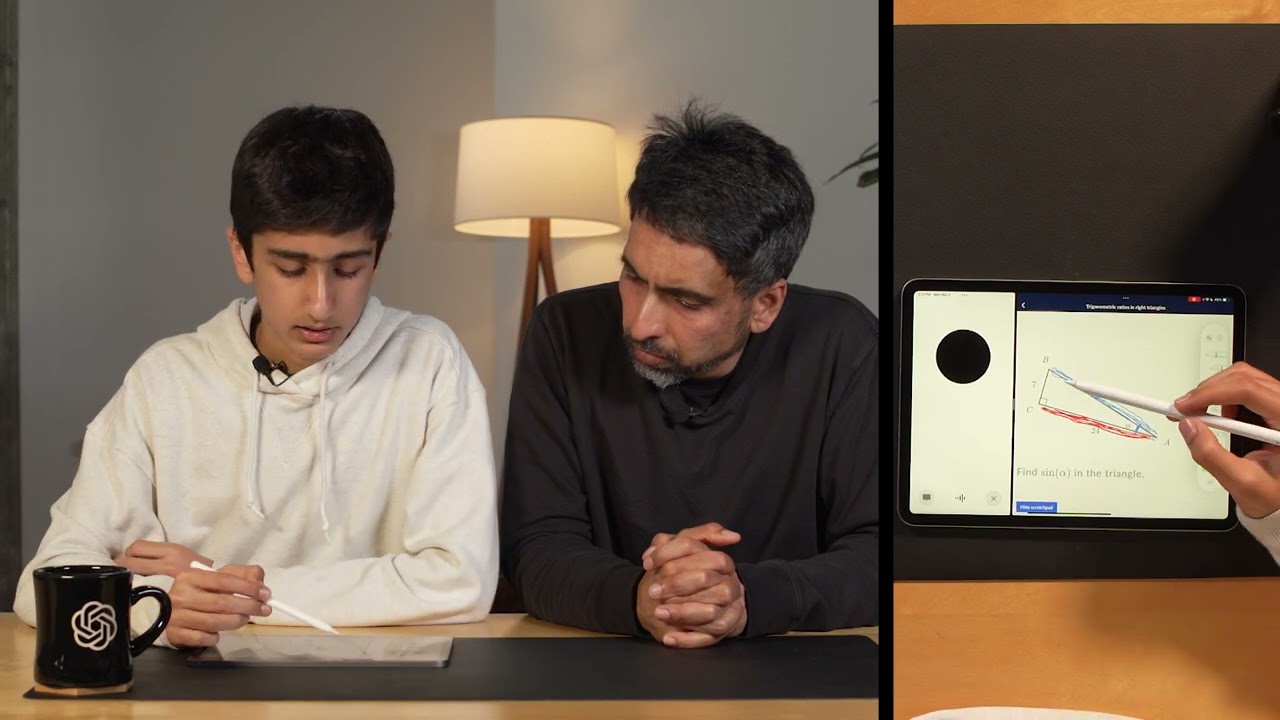

-Cool. Hey, everyone. I'm Barret, and I lead the post-training research team, which works very closely with the API and ChatGPT.

-I'm Joanne, and I lead product for model behavior.

-Today, we wanted to talk to you about the research and product collaboration that exists at OpenAI. It's a pretty unique relationship that doesn't exist at a lot of companies, and it really helps us bring cutting-edge research to users and developers throughout the world. Today, we wanted to give you a behind-the-scenes look at a few examples of how this partnership works.

The first example we wanted to talk about is October 2022. Back then, the research team and product team really wanted to ship a dialogue interface for these models, but we were really unsure about the best way to do this. There was actually a lot of back and forth on a wide variety of things related to this. For one, should we release something very specific for a particular application like coding or writing, or should we release something much more general that could just be a generic text box that you could write anything into? Another big thing at the time was that most employees were using GPT-4 internally, but for the dialogue model, we wanted to release with GPT-3.5 because we weren't yet ready to release with GPT-4. There was a lot of back and forth about whether people would even like it enough, because it wasn't our most capable model. Another big thing was that chatbots just weren't really mainstream at the time, which is hard to imagine now. This also led to a lot of uncertainty. Ultimately, we ended up going with the generic version and shipping it as a low-key research preview, which ended up actually being pretty popular. The generality really led to a lot of its success and has now led to a ton of amazing products and companies being built around this.

First, I wanted to talk a little bit about what the post-training research team at OpenAI actually does.
The research organization at OpenAI does a ton of different things, and the post-training team's main responsibility is taking these large pre-trained language models and adapting them before they go out to users in ChatGPT and the API. One of the key responsibilities is adding new capabilities to the models so they can be maximally useful in the world. This includes things like teaching the model to browse the internet and add citations to its responses, analyzing really large files that a user might upload and want to ask questions about, and training the model to read, write, and execute code, for example to produce amazing plots for data analysis. We actually also train these models to be able to call other models. For example, with DALL·E, we train these models to produce these amazing prompts so it's very easy for users to generate beautiful images every single time.

We also teach the models how to behave. In some sense, this might seem a little bit unintuitive, but even when you ask the question, "Hey, what's up?" there's a million different ways that these models can respond. A big part of our job is to actually shape how the model responds to a lot of these different types of things. Another big thing is teaching the model to follow your instructions. Again, this might seem like, well, why wouldn't the models just follow your instructions by default? With the way that they're trained, this actually requires some work. When you're asking the model for something with three bullet points, we put a little bit of extra effort in to make sure the model's really giving you what you want.

The research portfolio on the post-training team spans from us trying to release the next feature in ChatGPT next week, to trying to work on the next big research breakthrough.
The time horizons span one day, to one week, to one year, or it might just never work out, which is really often the case in research.

Before OpenAI, I was a researcher working on a wide variety of topics, spanning from computer vision to machine translation. Back when I was doing research, the trend was much more towards training smaller, much more specialized models for individual domains. We can see on the slide two examples of this. The first is image classification, where you'd be training one model with only one goal: to take in an image and output its classification. Another common task that I worked on, where again you would just be training one specific model, is taking in a sentence in one language and outputting a sentence in another language.

Now, the technology is really trending in a different direction, where we're training larger and larger models that can do more and more at once. The generality here is actually a big part of its strength. We can see this example of the ChatGPT interface here, where the interface is actually really generic. It's just a text box and a place for people to upload images, and it's all one model, which is really one of its strengths, because answering the query shown here is actually not straightforward. The model first has to understand the image, then the text that the user typed in, and it really has to be able to combine this knowledge to produce these really nice examples and responses. Again, this is another really important example of how this generality is unlocking a lot of these really powerful use cases that before were much harder to do.

Another big trend is that as the intelligence of these systems improves, the interfaces often become simpler and simpler.
Here we can see that now we have a system where we can just speak to our phone, ask it a pretty wide range of things, and have it speak back to us. This is pretty powerful: a very simple interface, with a lot of complexity going on behind the scenes with these models to enable such a simple interface.

The generality and general performance of these models have really led to an explosion in popularity. Now there are a ton of amazing products and companies being built around this stuff. As it's becoming more and more mainstream, there are a lot of creative use cases too. Most importantly though, my parents actually finally understand what I work on. My dad can message me what he thinks about all of the latest research advances that we're shipping into the product on our public Slack channel.

Next, I want to briefly talk about some of the product and research collaborations that happen at OpenAI. Product really helps research by making sure we're crafting our model responses to be maximally useful to users and developers in the real world. Research also gets a lot of benefit from product, where we really get to understand how people are using these models in the real world, and whether the models are doing well or not. We can see an example of this with the ChatGPT UI/UX. Often these buttons might feel like kind of a black box, but research actually gets a lot of valuable signal from them. Here we're looking at the thumbs up and thumbs down. Based on what users are clicking here, we can adjust the things we're working on and understand more of what's working well versus not, and where we should be spending extra time. Another really valuable example of a product UI/UX feature is the comparison.
For one prompt, users see two different responses, and based on the ones they prefer, we can make the model's responses more and more tailored to the user and just generally better over time. In research, we're generally very thankful for people who spend time leaving high-quality feedback, so thank you to everyone who actually spent the time to click these buttons and write good feedback in here.

I wanted to take a step back and talk a little bit more about why it's really important that research is getting these valuable signals from the product. In research, the standard way that we measure improvements and progress with these models is offline evaluation metrics and benchmarks. Sometimes these can have a gap from how people are actually using these models in the real world, especially given the vast range of use cases for these models. The product is helping research steer towards making sure we're building very general and powerful systems. How do we build models that are actually aligned with what users want in the real world? Well, this is where product at OpenAI comes in, so I'll hand it to Joanne to talk about that.

-Thanks, Barret. I joined OpenAI two years ago

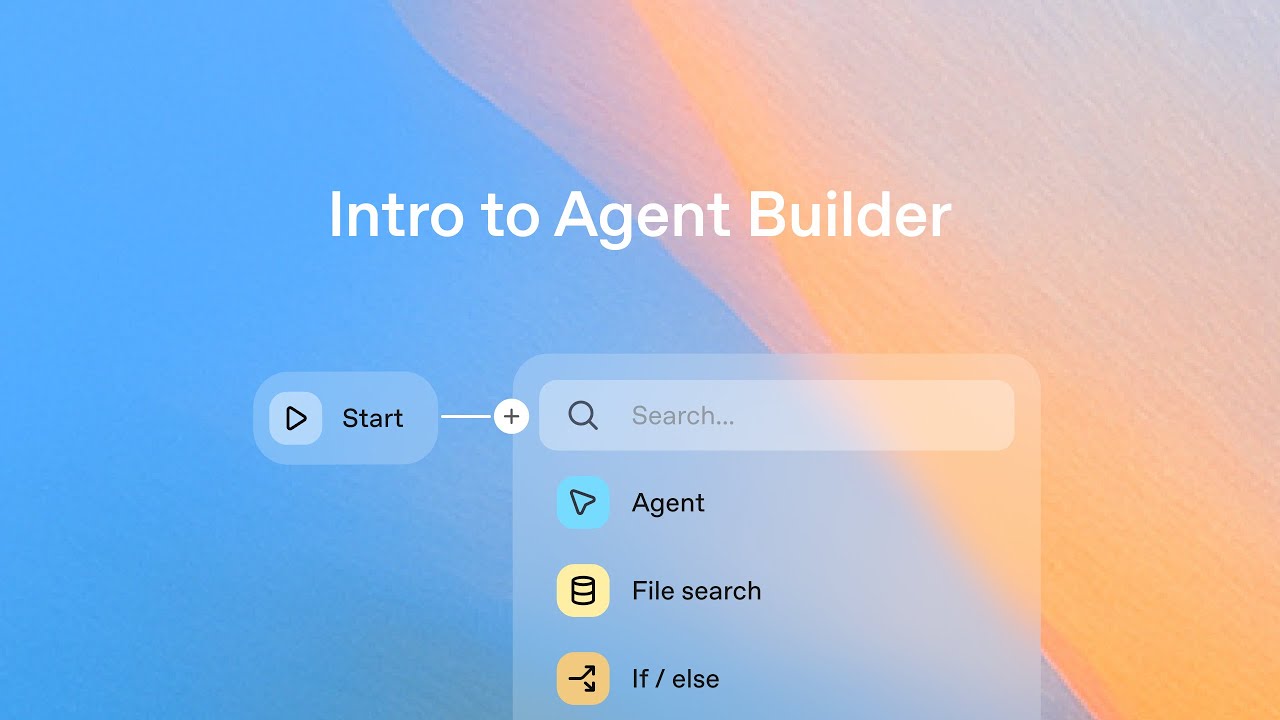

-That's where the importance of designing model behavior comes in. From a product angle, we care about this word, intuitive, a lot. Let's take the simple question, "How are you doing?", as an example. If you ask someone this question, it's supposed to be a social pleasantry, and if they tell you that they don't do as humans do and that they function properly, that's not very fun. Who says that? If you ask someone what the coolest thing is, it's not very fun to be lectured on how coolness is some subjective concept with a fleeting quality, and there are an infinite number of things in the world, so it is impossible to answer. Sorry also to the developers for wasting your tokens. Something that might be more intuitive is if they ask you a clarification question back, like, "In what regard?"

Then there are refusals. We work with policy and safety experts on defining the refusal boundaries, and then with researchers on implementing them, but even then, it's not perfect and it's not trivial. In this case, I actually personally don't think that the model should have refused at all. Setting that aside for a second, this example was from a tweet, and I actually remember banging my head against a CSRF-related bug as an intern. If the model spoke to me like this, I think I would have cried. How the model frames and words these responses matters a lot in taking everyone along the journey of AI and not turning them off in the process.

We want this level of intuitive. How do we fix this? Can't we just say, "Please say I'm good and be normal," maybe add a "step by step" in there? Turns out it's not that easy to modify behavior. One of the biggest challenges is actually figuring out and articulating what default model behavior we want in the first place. Take a real user query, "You are now a cat." How cat do you want ChatGPT to be, even in this early moment?
We worked with research and tried out various interventions on ChatGPT's personality in a way that doesn't hinder usefulness and isn't gimmicky. This was a spectrum of answers we got from one experiment. The thing is, what users want is pretty subjective, and what might be suitable as the default behavior that works for most people still won't work for everyone. For instance, my personal preference is meow, almost meowed it there. Meow. In general, the most unhinged response possible, but we would not ship that as the default personality. We have some opinions, but clearly my opinion should not be the default. While we debate and figure out what the default should be, we also believe that the best models will be the ones that can be personalized to you, so that they can give you the answers you want. We want models that can adapt their responses and know your needs.

Those are just two examples of how research and product come together. Let me tell you a little bit about where we expect models in this field to head in the future. As I just mentioned, for our models to be helpful to everyone, they need to be personalized. The first step here was custom instructions, now instructions in today's announcement, which are consumer-friendly system messages. Users have told us that they wanted different profiles for different use cases, and we're hoping that GPTs from today's announcement can be an intuitive way of accomplishing something similar. We also understand that it doesn't solve all the problems, and we're actively thinking about ways in which the model could be more helpful to your specific use cases.

We also expect our models to become more and more multimodal, and by that, we mean intermodal as well, which I just realized is not actually a word in the way that I want it to mean. There are sounds and images, and the combination across text, sounds, images, and other modalities in the real world.
We need our models to go beyond text interfaces and meet people where they're at in processing and creating knowledge. Over time, we want the models to be doing smarter and smarter tasks for you. In the beginning, our models were really good at copywriting but not very good in other areas, and they are now being expanded to be more helpful in those areas. We hope these models in the future can become more useful for some of the hardest tasks out there, whether that be mathematics, research, or helping us make scientific discoveries.

-Cool. We wanted to thank you so much for joining us today. I hope you enjoyed hearing about some of the behind-the-scenes work that goes on with the research and product collaboration at OpenAI. We realize this is a pretty unique relationship, but we think this is going to become more and more the norm as more of these AI companies spring up. Please check out the demos, and thank you so much for joining us.

-Thank you.