The “Biggest” AI That Came Out Of Nowhere!

Video description

❤️ Check out Lambda here and sign up for their GPU Cloud: https://lambda.ai/papers

Guide for using DeepSeek on Lambda:

https://docs.lambdalabs.com/education/large-language-models/deepseek-r1-ollama/?utm_source=two-minute-papers&utm_campaign=relevant-videos&utm_medium=video

Kimi K2:

https://moonshotai.github.io/Kimi-K2/

API: https://platform.moonshot.ai

Run it yourself locally: https://x.com/unslothai/status/1944780685409165589

Sources:

https://x.com/chetaslua/status/1943681568549052458

https://x.com/satvikps/status/1944861384573169929

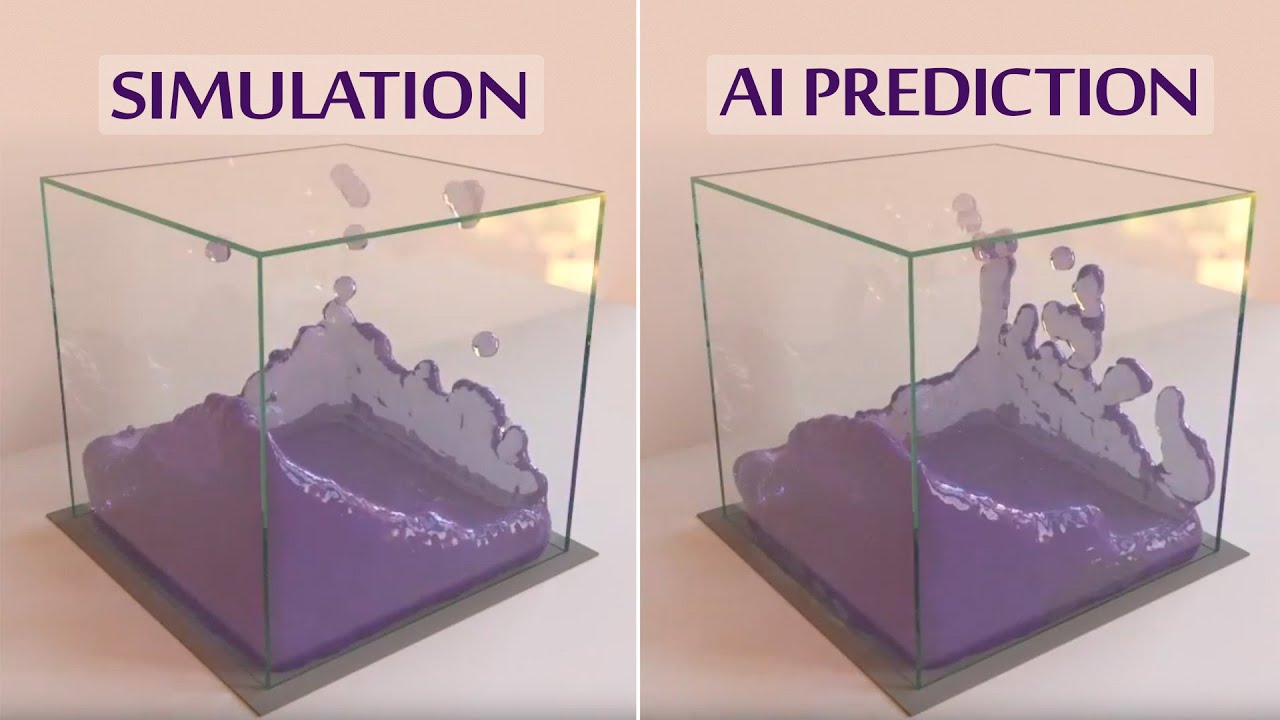

📝 My paper on simulations that look almost like reality is available for free here:

https://rdcu.be/cWPfD

Or here is the original Nature Physics link with clickable citations:

https://www.nature.com/articles/s41567-022-01788-5

🙏 We would like to thank our generous Patreon supporters who make Two Minute Papers possible:

Benji Rabhan, B Shang, Christian Ahlin, Gordon Child, John Le, Juan Benet, Kyle Davis, Loyal Alchemist, Lukas Biewald, Michael Tedder, Owen Skarpness, Richard Sundvall, Steef, Sven Pfiffner, Taras Bobrovytsky, Thomas Krcmar, Tybie Fitzhugh, Ueli Gallizzi

If you wish to appear here or pick up other perks, click here: https://www.patreon.com/TwoMinutePapers

My research: https://cg.tuwien.ac.at/~zsolnai/

X/Twitter: https://twitter.com/twominutepapers

Thumbnail design: Felícia Zsolnai-Fehér - http://felicia.hu