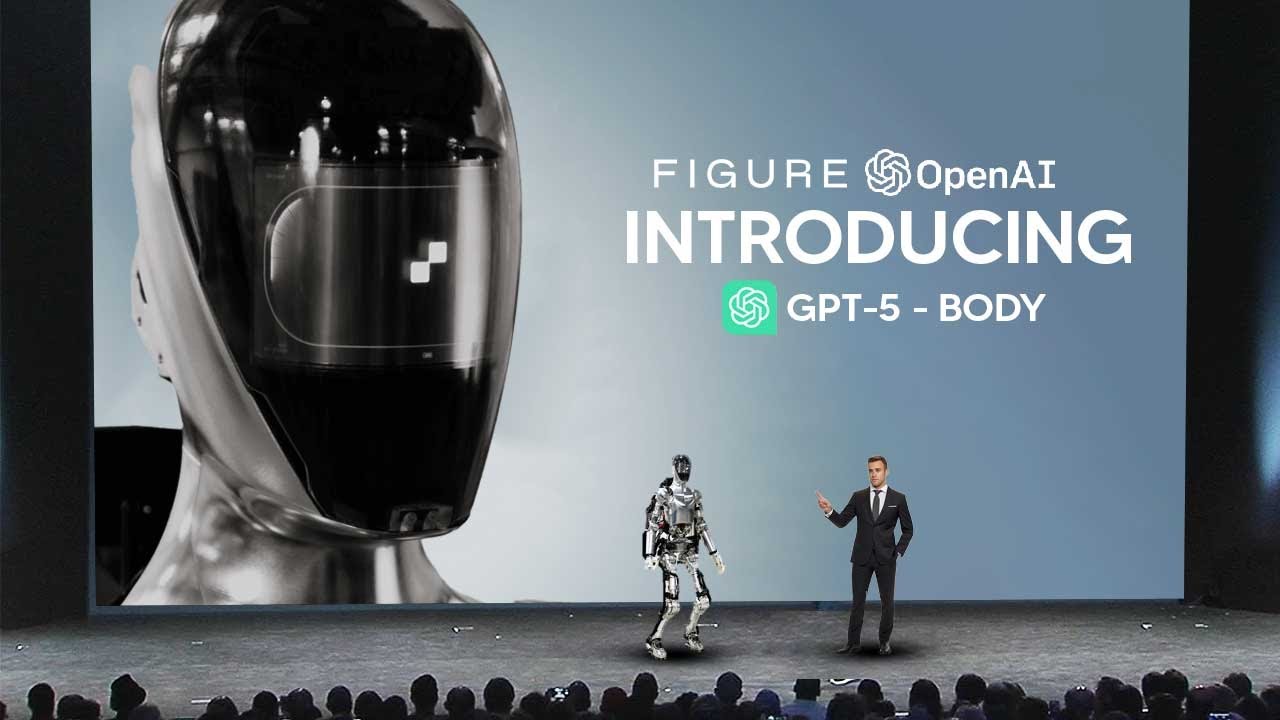

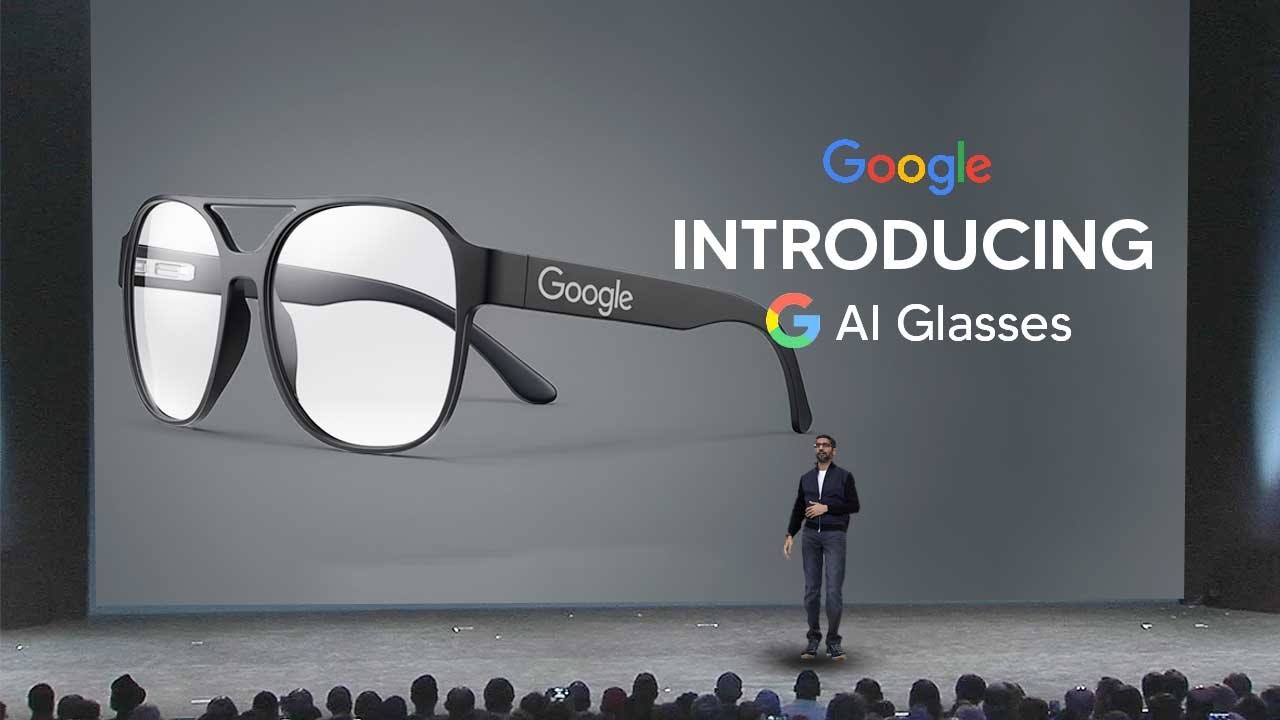

This Is How You Know AGI Is Close...

Video description

Check out the free community: https://www.skool.com/theaigridcommunity

🐤 Follow Me on Twitter https://twitter.com/TheAiGrid

🌐 Interested In AI Business: https://www.youtube.com/@TheAIGRIDAcademy

Links From Today's Video:

https://x.com/ShaneLegg/status/2014345509675155639

Welcome to my channel, where I bring you the latest breakthroughs in AI. From deep learning to robotics, I cover it all. My videos offer valuable insights and perspectives that will expand your knowledge and understanding of this rapidly evolving field. Be sure to subscribe and stay updated on my latest videos.

Was there anything I missed?

(For Business Enquiries) contact@theaigrid.com

Music Used

LEMMiNO - Cipher

https://www.youtube.com/watch?v=b0q5PR1xpA0

CC BY-SA 4.0

LEMMiNO - Encounters

https://www.youtube.com/watch?v=xdwWCl_5x2s

#LLM #Largelanguagemodel #chatgpt

#AI

#ArtificialIntelligence

#MachineLearning

#DeepLearning

#NeuralNetworks

#Robotics

#DataScience