Build an AI agent with LiveKit for real-time Speech-to-Text 🤖 | Full Python tutorial

Video description

NOTE: A new version of LiveKit Agents has been released since this video was recorded. Our updated blog post has the new code blocks: https://www.assemblyai.com/blog/livekit-realtime-speech-to-text

🔑 Get an AssemblyAI API Key: https://www.assemblyai.com/dashboard/signup?utm_source=youtube&utm_medium=referral&utm_campaign=yt_ry_9

🧑‍💻 GitHub repo: https://github.com/oconnoob/realtime-stt-livekit-assemblyai

📃 Blog post: https://www.assemblyai.com/blog/livekit-realtime-speech-to-text/?utm_source=youtube&utm_medium=referral&utm_campaign=yt_ry_9

🟦 LiveKit Docs: https://docs.livekit.io/home/

Learn how to add an AI agent for real-time Speech-to-Text to your applications in this comprehensive tutorial! I'll walk you through creating a LiveKit agent that transcribes audio streams as they arrive using AssemblyAI's Streaming Speech-to-Text API. It's a good fit for developers looking to enhance their real-time communication apps with AI capabilities.

🧠 What You'll Build:

✅ A complete LiveKit server setup connected to a web application

✅ A Python agent that processes audio streams in real time

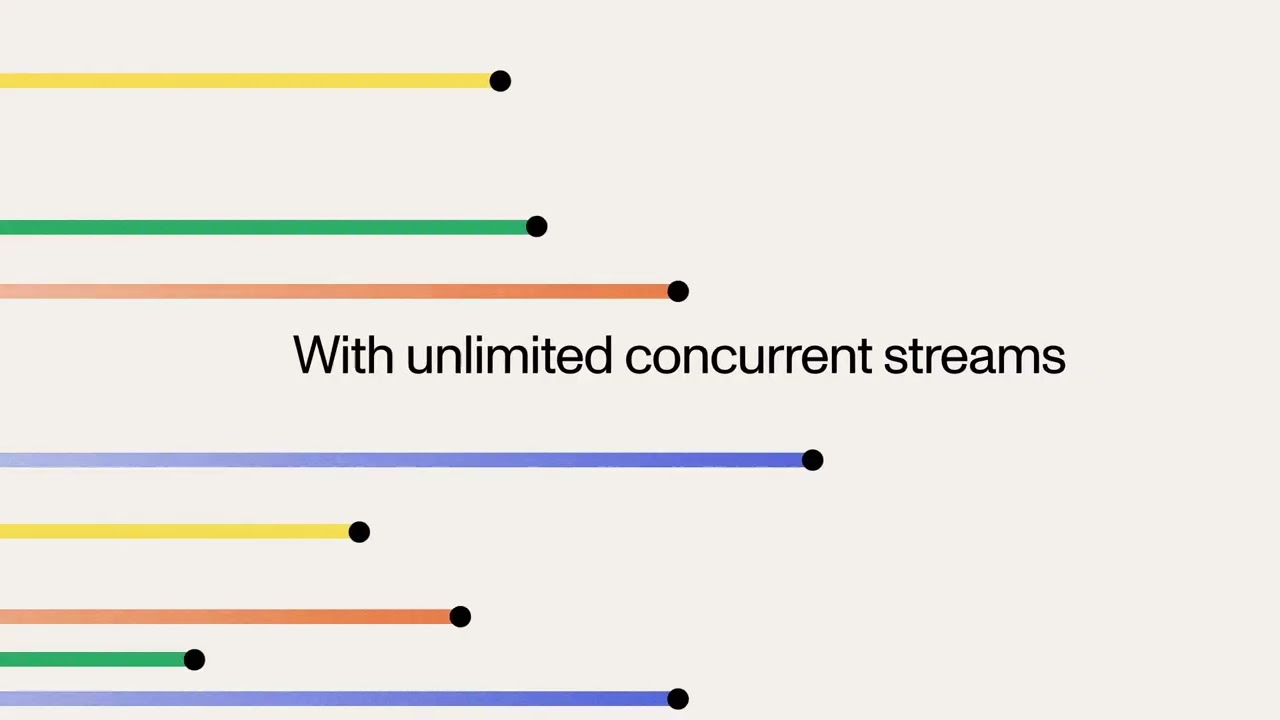

✅ Instant transcription delivery to all participants

✅ A working demo you can test and modify
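
For a rough feel of the agent pattern in the list above (receive audio, transcribe it, deliver the text to every participant), here is a minimal sketch in plain `asyncio`. It is not the tutorial's code and calls neither the LiveKit nor the AssemblyAI SDK: `fake_audio_source`, `fake_transcribe`, and `run_agent` are hypothetical stand-ins, and audio frames are modeled as short strings.

```python
import asyncio


async def fake_audio_source(frames, queue):
    """Hypothetical stand-in for a subscribed audio track: pushes frames, then a sentinel."""
    for frame in frames:
        await queue.put(frame)
    await queue.put(None)  # None signals the end of the stream


async def fake_transcribe(frame):
    """Hypothetical stand-in for a streaming speech-to-text call."""
    await asyncio.sleep(0)  # yield to the event loop, as a network call would
    return frame.upper()


async def run_agent(frames, participants):
    """Consume frames as they arrive and deliver each transcript to every participant."""
    queue = asyncio.Queue()
    producer = asyncio.create_task(fake_audio_source(frames, queue))
    inboxes = {name: [] for name in participants}
    while True:
        frame = await queue.get()
        if frame is None:
            break
        text = await fake_transcribe(frame)
        for inbox in inboxes.values():
            inbox.append(text)  # every participant receives the same transcript
    await producer
    return inboxes


def demo():
    # Two "frames" of audio, two participants in the room (all made-up values)
    return asyncio.run(run_agent(["hello", "world"], ["alice", "bob"]))
```

Calling `demo()` returns one transcript list per participant. In a real agent the queue would be fed by the room's audio track and the transcription step would be a streaming API session; see the blog post and repo linked above for the actual implementation.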

🛠️ Technologies Covered:

LiveKit Cloud & Agents

Python Async Programming

AssemblyAI's Streaming API

WebRTC Fundamentals

This tutorial takes you from basic concepts to a fully functioning application. By the end, you'll understand how to implement real-time transcription in your own projects and have the code to prove it! Whether you're building a video conferencing app, creating accessibility features, or exploring AI integration, this guide has everything you need to get started.

▬▬▬▬▬▬▬▬▬▬▬▬ CONNECT ▬▬▬▬▬▬▬▬▬▬▬▬

🖥️ Website: https://www.assemblyai.com

🐦 Twitter: https://twitter.com/AssemblyAI

🦾 Discord: https://discord.gg/Cd8MyVJAXd

▶️ Subscribe: https://www.youtube.com/c/AssemblyAI?sub_confirmation=1

🔥 We're hiring! Check our open roles: https://www.assemblyai.com/careers

▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬▬

Timestamps:

00:00 - Intro

00:37 - How LiveKit works

01:20 - Step 1: Set up the LiveKit server

03:04 - Step 2: Set up the frontend application

03:58 - Step 3: Build the AI Agent

08:44 - Application demo!

09:43 - Build a chatbot in Python with Claude 3.5 Sonnet

#MachineLearning #DeepLearning #LiveKit #AssemblyAI #SpeechToText #AITranscription #WebRTC #PythonTutorial #RealTimeAI