Feedback Transformers: Addressing Some Limitations of Transformers with Feedback Memory (Explained)

Machine-readable: Markdown · JSON API · Site index

Описание видео

#ai #science #transformers

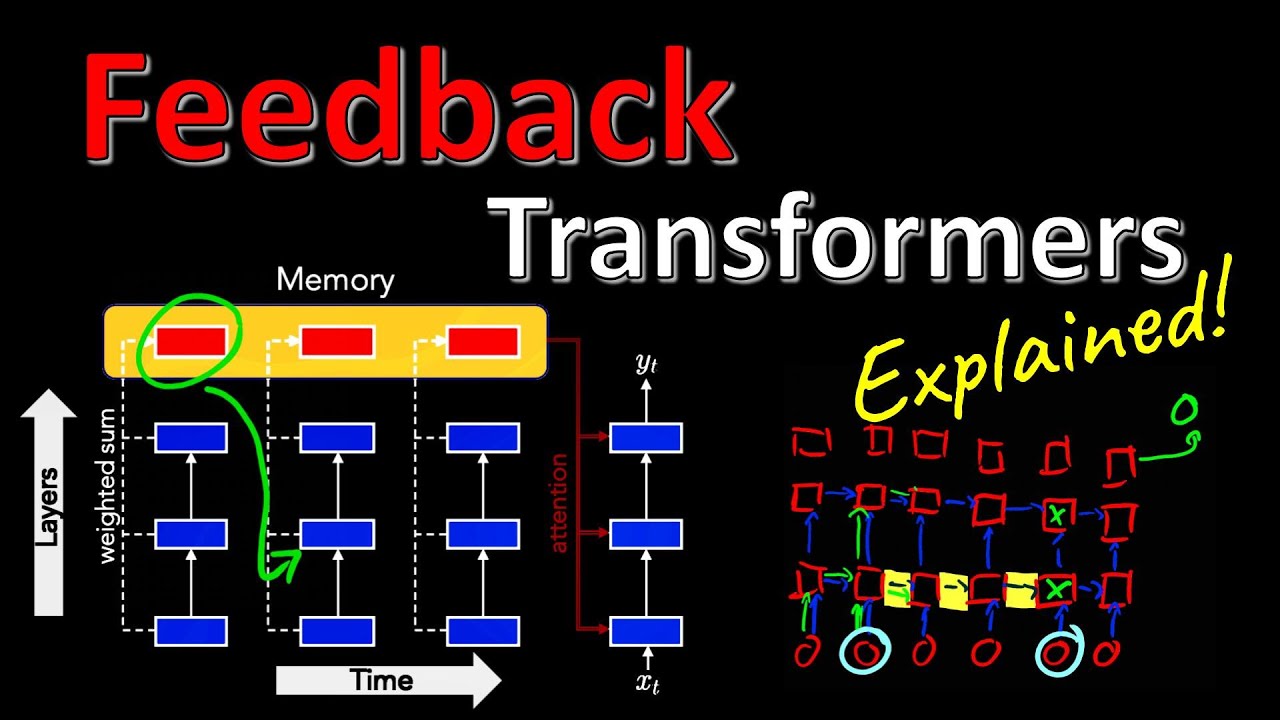

Autoregressive Transformers have taken over the world of Language Modeling (GPT-3). However, in order to train them, people use causal masking and sample parallelism, which means computation only happens in a feedforward manner. This results in higher layer information, which would be available, to not be used in the lower layers of subsequent tokens, and leads to a loss in the computational capabilities of the overall model. Feedback Transformers trade-off training speed for access to these representations and demonstrate remarkable improvements in complex reasoning and long-range dependency tasks.

OUTLINE:

0:00 - Intro & Overview

1:55 - Problems of Autoregressive Processing

3:30 - Information Flow in Recurrent Neural Networks

7:15 - Information Flow in Transformers

9:10 - Solving Complex Computations with Neural Networks

16:45 - Causal Masking in Transformers

19:00 - Missing Higher Layer Information Flow

26:10 - Feedback Transformer Architecture

30:00 - Connection to Attention-RNNs

36:00 - Formal Definition

37:05 - Experimental Results

43:10 - Conclusion & Comments

Paper: https://arxiv.org/abs/2002.09402

My video on Attention: https://youtu.be/iDulhoQ2pro

ERRATA: Sometimes I say "Switch Transformer" instead of "Feedback Transformer". Forgive me :)

Abstract:

Transformers have been successfully applied to sequential, auto-regressive tasks despite being feedforward networks. Unlike recurrent neural networks, Transformers use attention to capture temporal relations while processing input tokens in parallel. While this parallelization makes them computationally efficient, it restricts the model from fully exploiting the sequential nature of the input. The representation at a given layer can only access representations from lower layers, rather than the higher level representations already available. In this work, we propose the Feedback Transformer architecture that exposes all previous representations to all future representations, meaning the lowest representation of the current timestep is formed from the highest-level abstract representation of the past. We demonstrate on a variety of benchmarks in language modeling, machine translation, and reinforcement learning that the increased representation capacity can create small, shallow models with much stronger performance than comparable Transformers.

Authors: Angela Fan, Thibaut Lavril, Edouard Grave, Armand Joulin, Sainbayar Sukhbaatar

Links:

TabNine Code Completion (Referral): http://bit.ly/tabnine-yannick

YouTube: https://www.youtube.com/c/yannickilcher

Twitter: https://twitter.com/ykilcher

Discord: https://discord.gg/4H8xxDF

BitChute: https://www.bitchute.com/channel/yannic-kilcher

Minds: https://www.minds.com/ykilcher

Parler: https://parler.com/profile/YannicKilcher

LinkedIn: https://www.linkedin.com/in/yannic-kilcher-488534136/

BiliBili: https://space.bilibili.com/1824646584

If you want to support me, the best thing to do is to share out the content :)

If you want to support me financially (completely optional and voluntary, but a lot of people have asked for this):

SubscribeStar: https://www.subscribestar.com/yannickilcher

Patreon: https://www.patreon.com/yannickilcher

Bitcoin (BTC): bc1q49lsw3q325tr58ygf8sudx2dqfguclvngvy2cq

Ethereum (ETH): 0x7ad3513E3B8f66799f507Aa7874b1B0eBC7F85e2

Litecoin (LTC): LQW2TRyKYetVC8WjFkhpPhtpbDM4Vw7r9m

Monero (XMR): 4ACL8AGrEo5hAir8A9CeVrW8pEauWvnp1WnSDZxW7tziCDLhZAGsgzhRQABDnFy8yuM9fWJDviJPHKRjV4FWt19CJZN9D4n