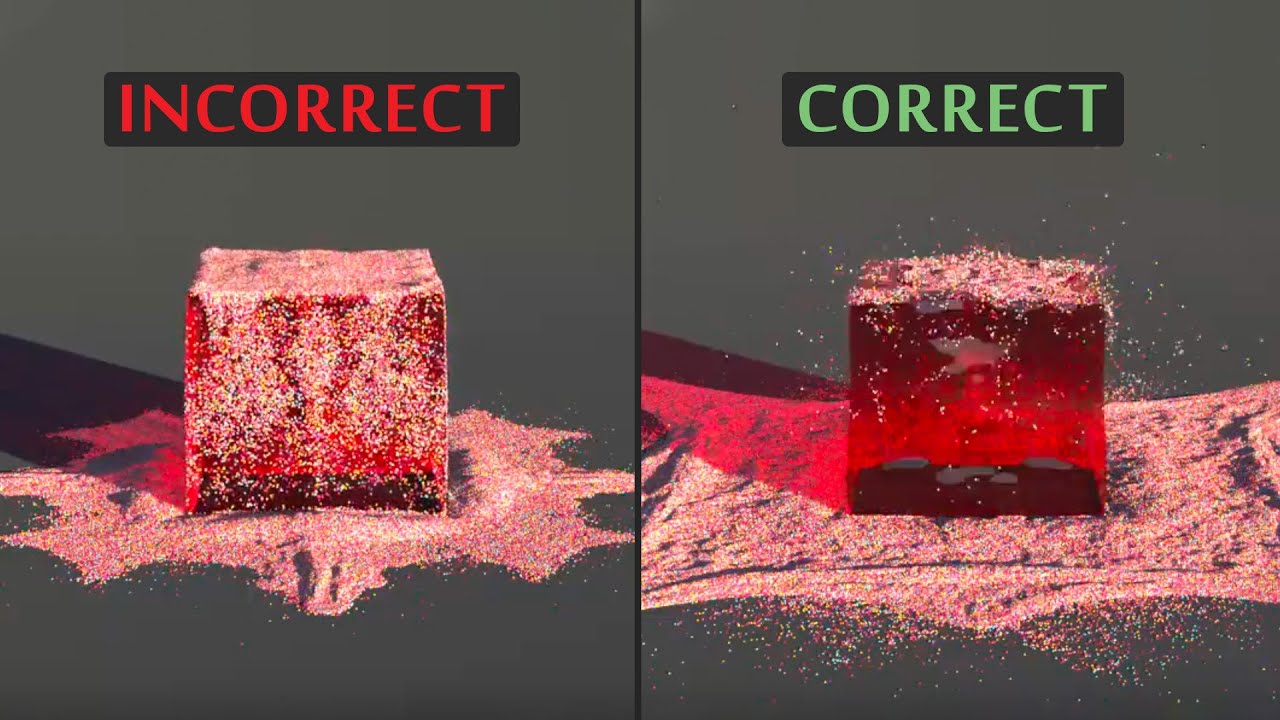

New AI: Photos Go In, Reality Comes Out! 🌁

Video description

❤️ Check out Lambda here and sign up for their GPU Cloud: https://lambdalabs.com/papers

📝 The paper "ADOP: Approximate Differentiable One-Pixel Point Rendering" is available here:

https://arxiv.org/abs/2110.06635

❤️ Watch these videos in early access on our Patreon page or join us here on YouTube:

- https://www.patreon.com/TwoMinutePapers

- https://www.youtube.com/channel/UCbfYPyITQ-7l4upoX8nvctg/join

🙏 We would like to thank our generous Patreon supporters who make Two Minute Papers possible:

Aleksandr Mashrabov, Alex Haro, Andrew Melnychuk, Angelos Evripiotis, Benji Rabhan, Bryan Learn, Christian Ahlin, Eric Martel, Gordon Child, Ivo Galic, Jace O'Brien, Javier Bustamante, John Le, Jonas, Kenneth Davis, Klaus Busse, Lorin Atzberger, Lukas Biewald, Matthew Allen Fisher, Mark Oates, Michael Albrecht, Michael Tedder, Nikhil Velpanur, Owen Campbell-Moore, Owen Skarpness, Rajarshi Nigam, Ramsey Elbasheer, Steef, Taras Bobrovytsky, Thomas Krcmar, Timothy Sum Hon Mun, Torsten Reil, Tybie Fitzhugh, Ueli Gallizzi.

If you wish to appear here or pick up other perks, click here: https://www.patreon.com/TwoMinutePapers

Thumbnail background design: Felícia Zsolnai-Fehér - http://felicia.hu

Meet and discuss your ideas with other Fellow Scholars on the Two Minute Papers Discord: https://discordapp.com/invite/hbcTJu2

Károly Zsolnai-Fehér's links:

Instagram: https://www.instagram.com/twominutepapers/

Twitter: https://twitter.com/twominutepapers

Web: https://cg.tuwien.ac.at/~zsolnai/