Dynamical Distance Learning for Semi-Supervised and Unsupervised Skill Discovery

Machine-readable: Markdown · JSON API · Site index

Описание видео

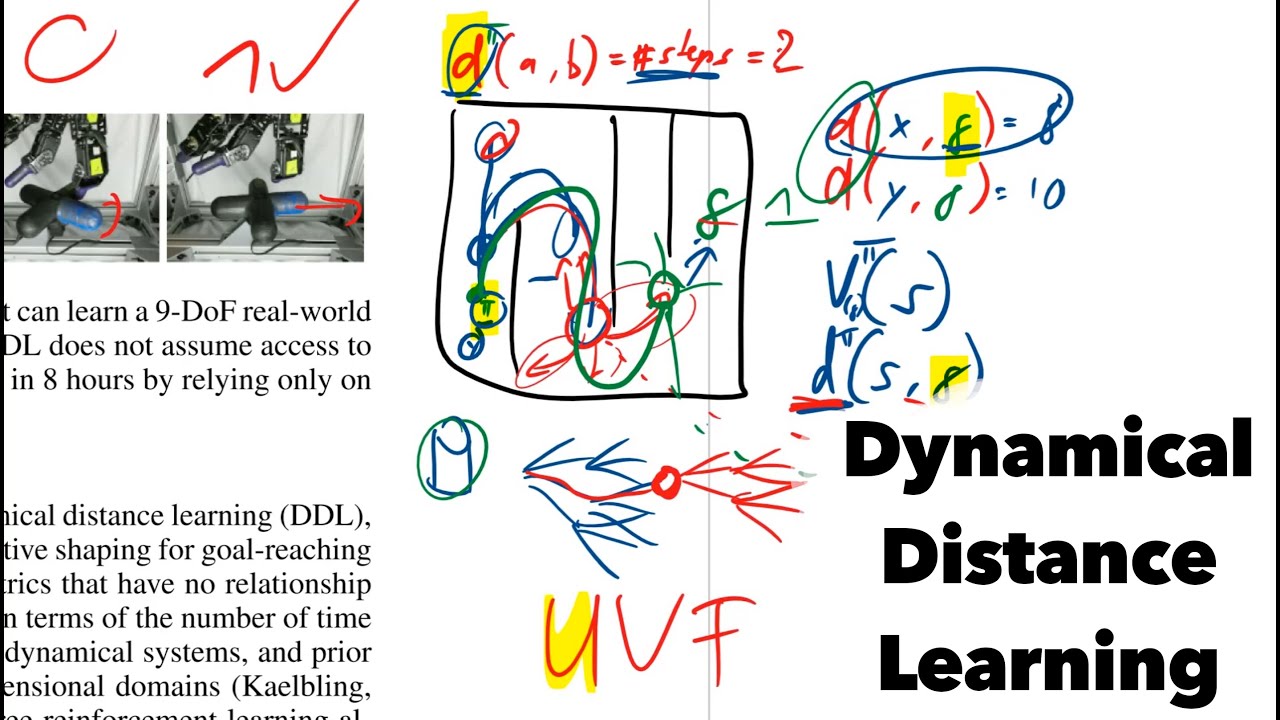

DDL is an auxiliary task for an agent to learn distances between states in episodes. This can then be used further to improve the agent's policy learning procedure.

Paper: https://arxiv.org/abs/1907.08225

Blog: https://sites.google.com/view/dynamical-distance-learning/home

Abstract:

Reinforcement learning requires manual specification of a reward function to learn a task. While in principle this reward function only needs to specify the task goal, in practice reinforcement learning can be very time-consuming or even infeasible unless the reward function is shaped so as to provide a smooth gradient towards a successful outcome. This shaping is difficult to specify by hand, particularly when the task is learned from raw observations, such as images. In this paper, we study how we can automatically learn dynamical distances: a measure of the expected number of time steps to reach a given goal state from any other state. These dynamical distances can be used to provide well-shaped reward functions for reaching new goals, making it possible to learn complex tasks efficiently. We show that dynamical distances can be used in a semi-supervised regime, where unsupervised interaction with the environment is used to learn the dynamical distances, while a small amount of preference supervision is used to determine the task goal, without any manually engineered reward function or goal examples. We evaluate our method both on a real-world robot and in simulation. We show that our method can learn to turn a valve with a real-world 9-DoF hand, using raw image observations and just ten preference labels, without any other supervision. Videos of the learned skills can be found on the project website: this https URL.

Authors: Kristian Hartikainen, Xinyang Geng, Tuomas Haarnoja, Sergey Levine

Links:

YouTube: https://www.youtube.com/c/yannickilcher

Twitter: https://twitter.com/ykilcher

BitChute: https://www.bitchute.com/channel/yannic-kilcher

Minds: https://www.minds.com/ykilcher