This AI Learned To Create Dynamic Photos! 🌁

Video description

❤️ Check out Weights & Biases and sign up for a free demo here: https://www.wandb.com/papers

❤️ Their report on this paper is available here: https://wandb.ai/wandb/xfields/reports/-Overview-X-Fields-Implicit-Neural-View-Light-and-Time-Image-Interpolation--Vmlldzo0MTY0MzM

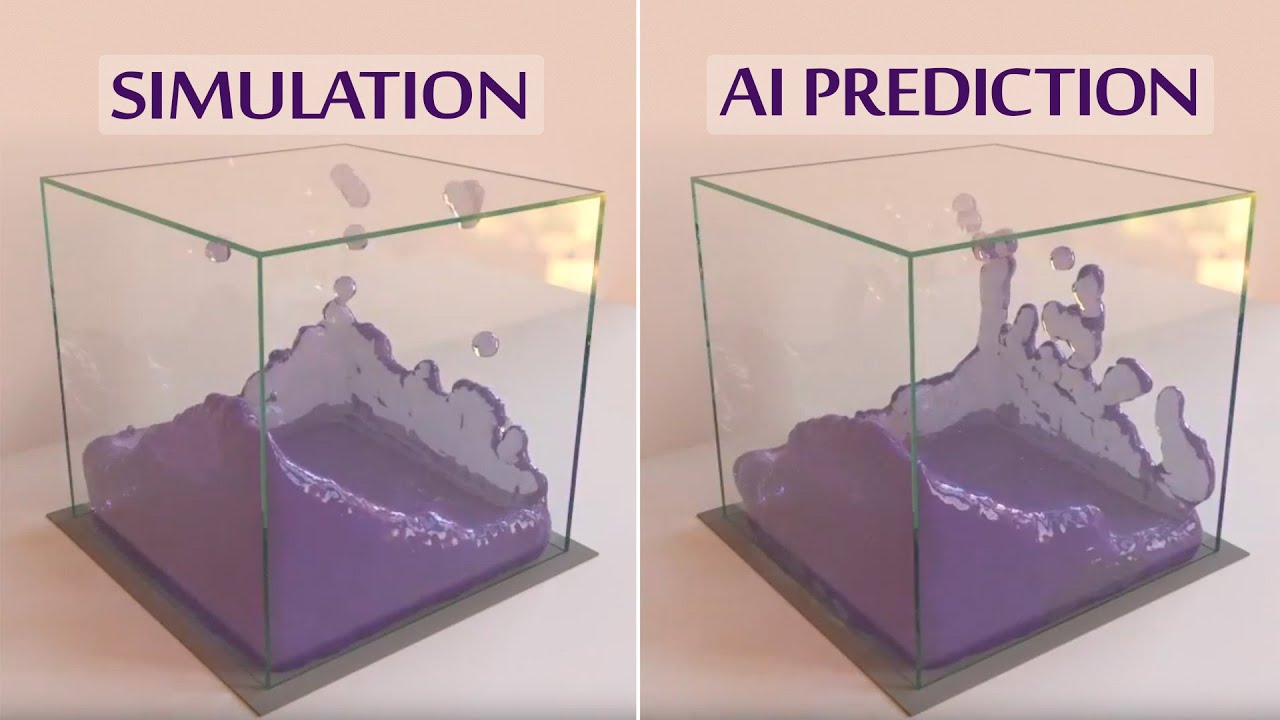

📝 The paper "X-Fields: Implicit Neural View-, Light- and Time-Image Interpolation" is available here:

http://xfields.mpi-inf.mpg.de/

📝 Our paper on neural rendering (and more!) is available here:

https://users.cg.tuwien.ac.at/zsolnai/gfx/gaussian-material-synthesis/

📝 Our earlier paper with high-resolution images for the caustics is available here:

https://users.cg.tuwien.ac.at/zsolnai/gfx/adaptive_metropolis/

🙏 We would like to thank our generous Patreon supporters who make Two Minute Papers possible:

Aleksandr Mashrabov, Alex Haro, Alex Serban, Alex Paden, Andrew Melnychuk, Angelos Evripiotis, Benji Rabhan, Bruno Mikuš, Bryan Learn, Christian Ahlin, Eric Haddad, Eric Lau, Eric Martel, Gordon Child, Haris Husic, Jace O'Brien, Javier Bustamante, Joshua Goller, Lorin Atzberger, Lukas Biewald, Matthew Allen Fisher, Michael Albrecht, Nikhil Velpanur, Owen Campbell-Moore, Owen Skarpness, Ramsey Elbasheer, Robin Graham, Steef, Taras Bobrovytsky, Thomas Krcmar, Torsten Reil, Tybie Fitzhugh.

If you wish to support the series, click here: https://www.patreon.com/TwoMinutePapers

Thumbnail background image credit: https://pixabay.com/images/id-820011/

Károly Zsolnai-Fehér's links:

Instagram: https://www.instagram.com/twominutepapers/

Twitter: https://twitter.com/twominutepapers

Web: https://cg.tuwien.ac.at/~zsolnai/