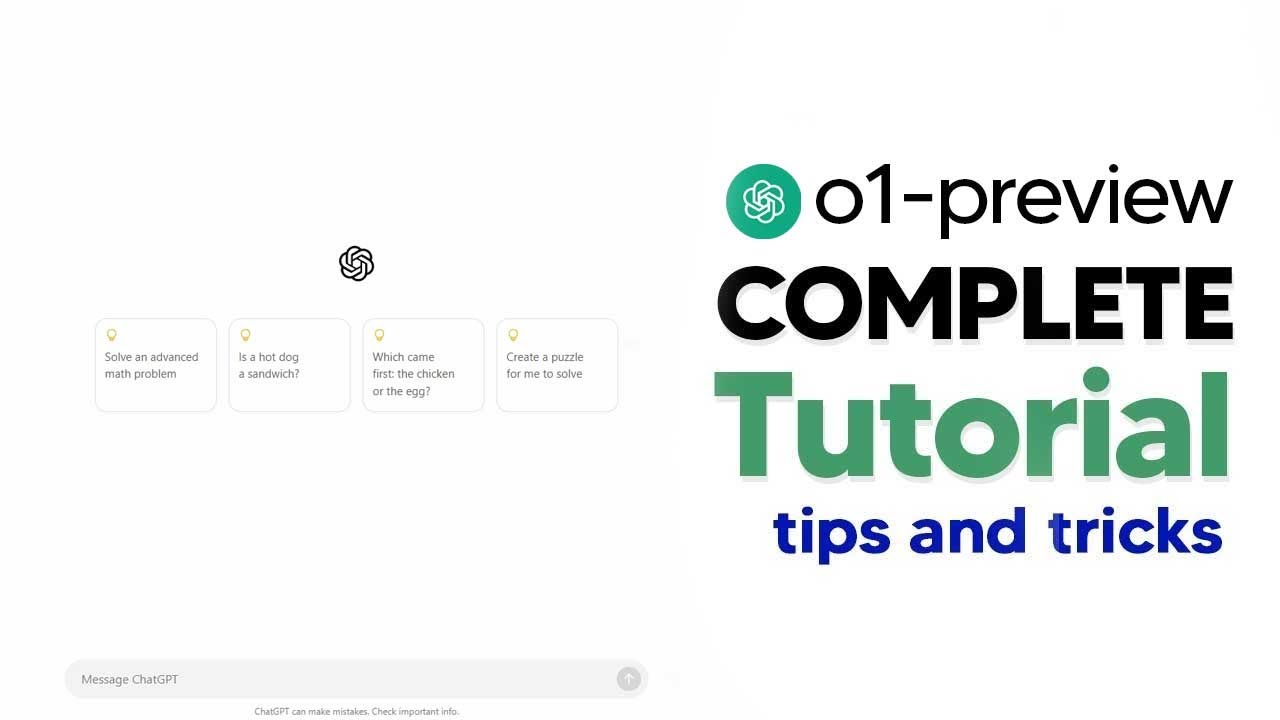

How To Use GPT-o1 Preview (o1-Preview Tutorial): Complete Guide With Tips and Tricks

Video description

Prepare for AGI with me - https://www.skool.com/postagiprepardness

🐤 Follow Me on Twitter https://twitter.com/TheAiGrid

🌐 Check out my website - https://theaigrid.com/

Links from today's video:

https://platform.openai.com/docs/guides/reasoning?reasoning-prompt-examples=coding-planning

00:00 - Introduction to OpenAI's o1 series models

00:27 - Comparison between o1-preview and o1-mini

01:00 - Unique features of o1 models, including reasoning tokens

01:24 - Limitations of o1 models in beta

01:43 - OpenAI's advice on prompting for o1 models

02:57 - Keeping prompts simple and direct

03:58 - Avoiding chain-of-thought prompting

05:09 - Using delimiters for clarity in prompts

06:27 - Limiting context from external sources

07:58 - Comparison between o1-preview and o1-mini

10:01 - Use cases for o1-mini

11:19 - Examples of using the models

13:26 - Coding example: Snake game in Python

14:46 - Note on the model's reasoning tokens

Welcome to my channel, where I bring you the latest breakthroughs in AI. From deep learning to robotics, I cover it all. My videos offer insights and perspectives that will expand your understanding of this rapidly evolving field. Be sure to subscribe and stay updated on my latest videos.

Was there anything I missed?

(For Business Enquiries) contact@theaigrid.com

#LLM #Largelanguagemodel #chatgpt

#AI

#ArtificialIntelligence

#MachineLearning

#DeepLearning

#NeuralNetworks

#Robotics

#DataScience