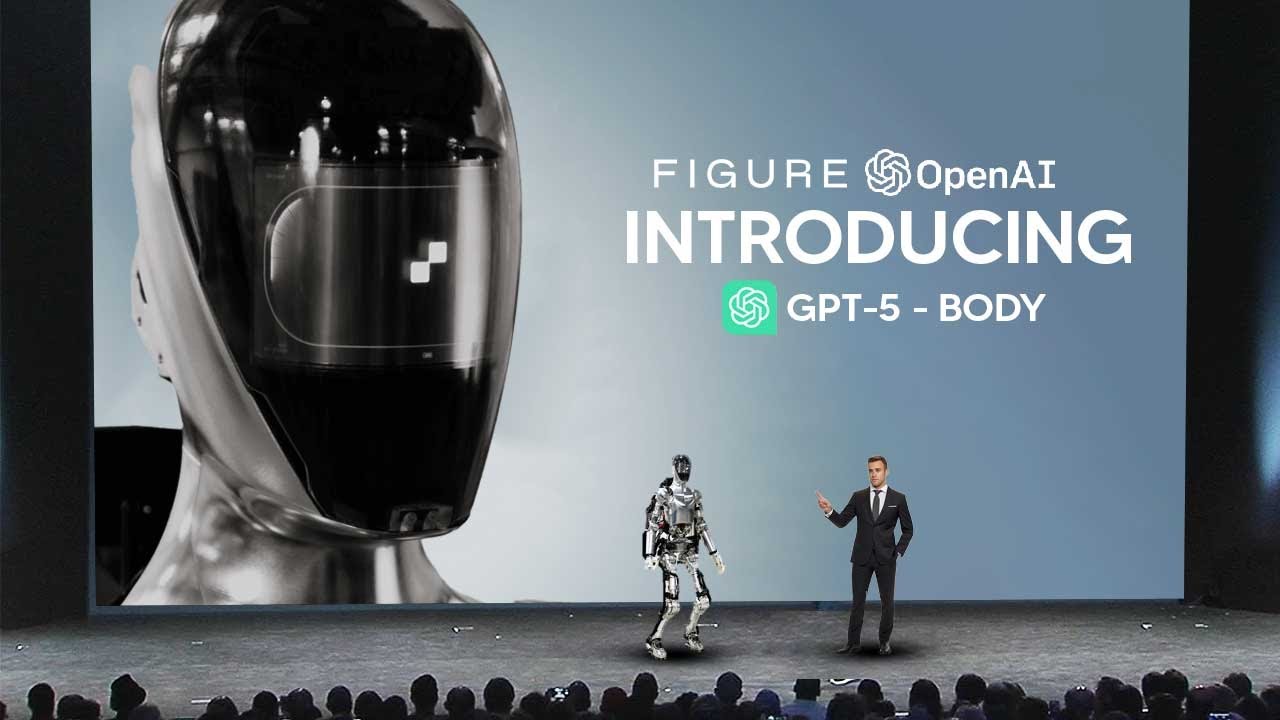

Sam Altman Just Revealed MORE DETAILS About GPT-5 and AGI


Video description

💬 Access GPT-4, Claude 2 and more - chat.forefront.ai/?ref=theaigrid

🎤 Use the best AI voice creator - elevenlabs.io/?from=partnerscott3908

✉️ Join Our Weekly Newsletter - https://mailchi.mp/6cff54ad7e2e/theaigrid

🐤 Follow us on Twitter - https://twitter.com/TheAiGrid

🌐 Check out our website - https://theaigrid.com/

https://www.youtube.com/watch?v=QFXp_TU-bO8&pp=ygUVU0FNIEFMVE1BTiBEQVZPUyBUQUxL

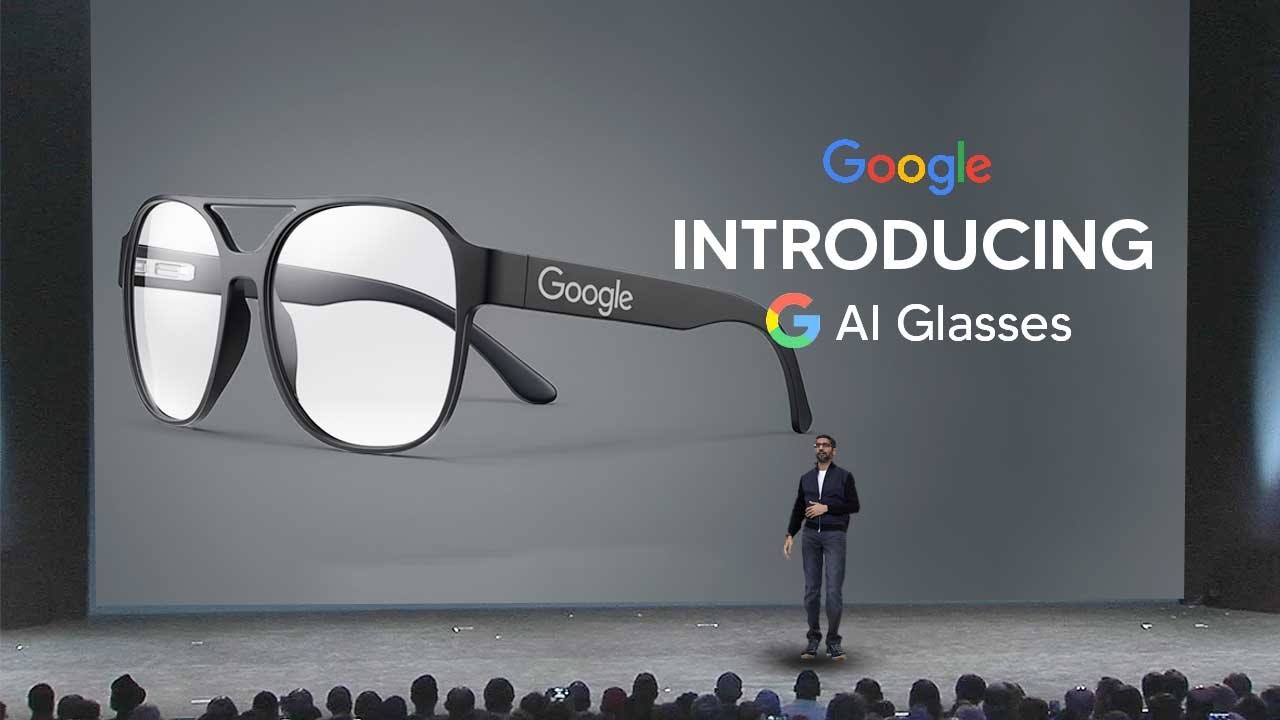

Welcome to our channel where we bring you the latest breakthroughs in AI. From deep learning to robotics, we cover it all. Our videos offer valuable insights and perspectives that will expand your knowledge and understanding of this rapidly evolving field. Be sure to subscribe and stay updated on our latest videos.

Was there anything we missed?

For business enquiries: contact@theaigrid.com

#LLM #Largelanguagemodel #chatgpt #AI #ArtificialIntelligence #MachineLearning #DeepLearning #NeuralNetworks #Robotics #DataScience