HUGE AI NEWS #16 DALL-E 3, New BARD, Midjourney 3D, New Self Driving, YouTube AI and More

Video description

https://mv-dream.github.io/index.html

https://twitter.com/JaimeYassif/status/1702448587949453400

https://www.youtube.com/watch?v=514IZJENQ3s

Copilot - https://twitter.com/_akhaliq/status/1704916883164614761

Midjourney 3D - https://twitter.com/nickfloats/status/1702721761790357733

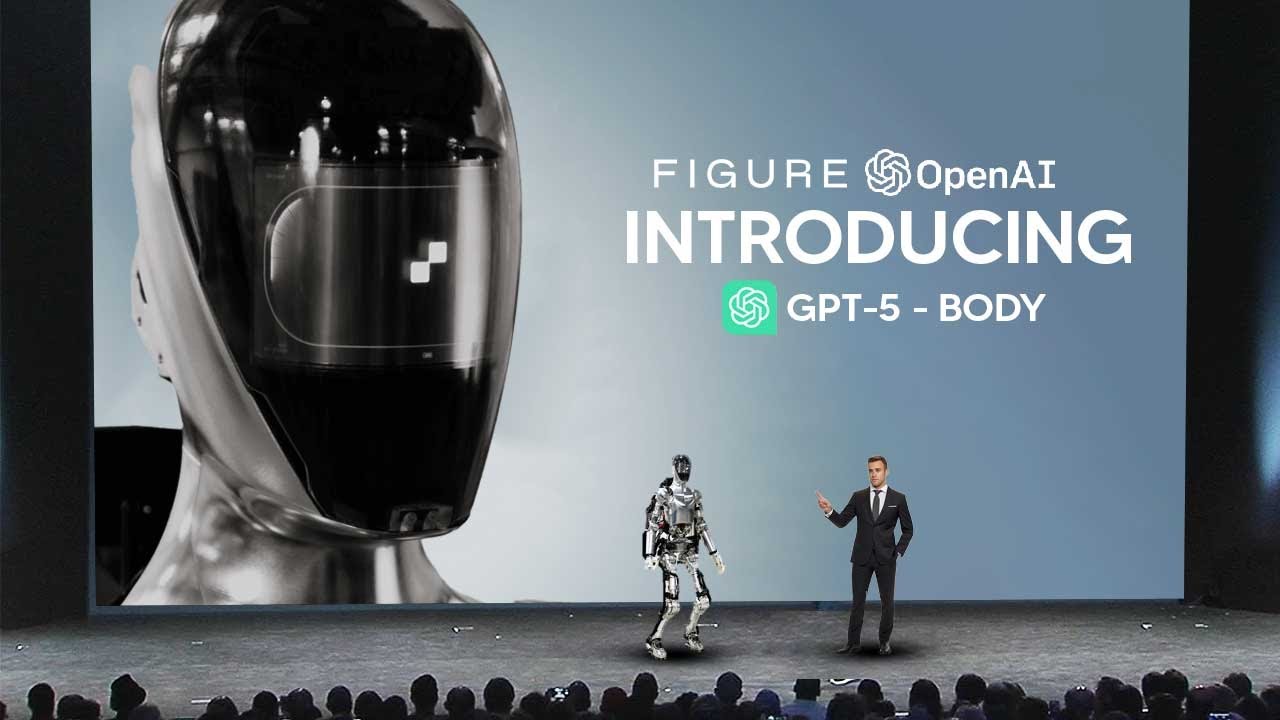

Next GPT - https://twitter.com/_akhaliq/status/1701435537356124200

AI Senate - https://twitter.com/unusual_whales/status/1702122741829169302

Elon tweet - https://twitter.com/elonmusk/status/1704919985246601320

LLM Driving - https://twitter.com/DrJimFan/status/1702718067191824491

YouTube AI - https://twitter.com/bilawalsidhu/status/1704874993039913051

Bard - https://twitter.com/Google/status/1704119261566800278

Midjourney vs DALL-E

YouTube AI 2 - https://twitter.com/YouTube/status/1704865999357403143

Welcome to our channel where we bring you the latest breakthroughs in AI. From deep learning to robotics, we cover it all. Our videos offer valuable insights and perspectives that will expand your knowledge and understanding of this rapidly evolving field. Be sure to subscribe and stay updated on our latest videos.

Was there anything we missed?

(For Business Enquiries) contact@theaigrid.com

#LLM #Largelanguagemodel #chatgpt

#AI

#ArtificialIntelligence

#MachineLearning

#DeepLearning

#NeuralNetworks

#Robotics

#DataScience

#IntelligentSystems

#Automation

#TechInnovation