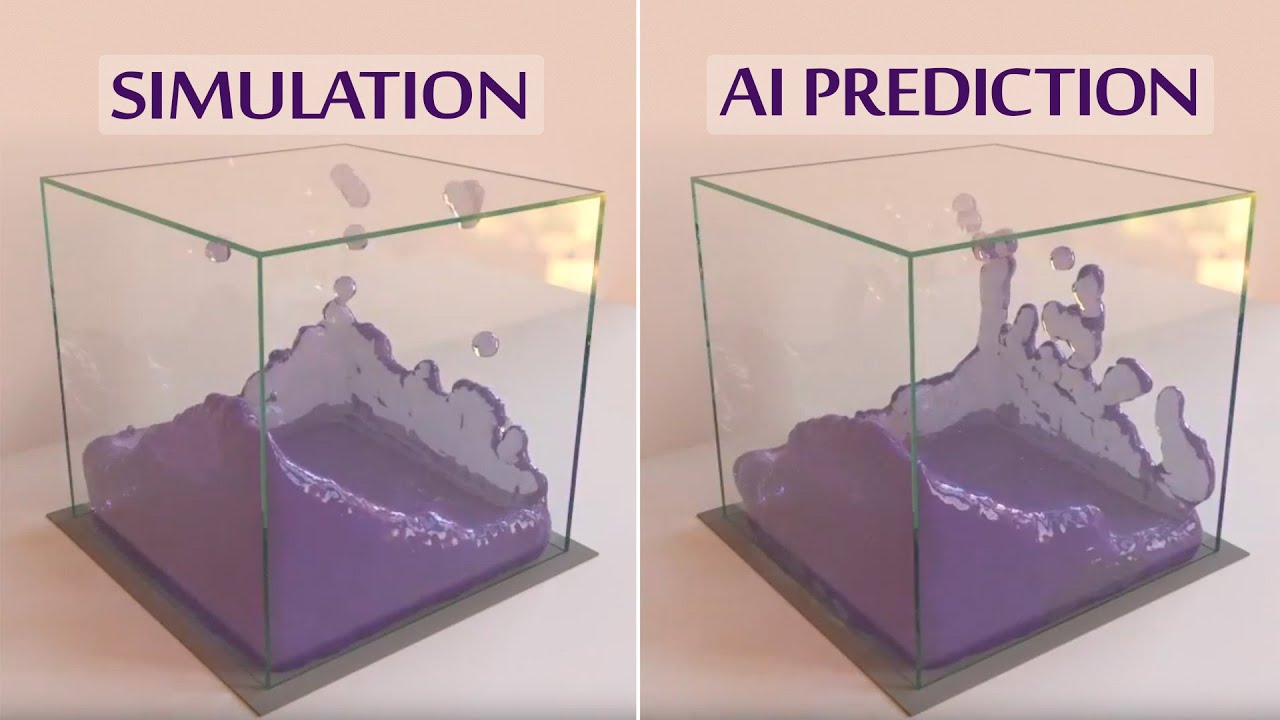

Hallucinating Images With Deep Learning | Two Minute Papers #74


Video description

During our journeys in deep learning, we have seen techniques that can summarize photographs in entire sentences that actually make sense. This time, we are going to turn this process around and ask a deep learning system to "hallucinate", i.e., generate images from sentences that we provide as input. The results are nothing short of insane!

_____________________________

The paper "Generative Adversarial Text to Image Synthesis" is available here:

http://arxiv.org/abs/1605.05396

Recommended for you:

Recurrent Neural Network Writes Sentences About Images - https://www.youtube.com/watch?v=e-WB4lfg30M

Deep Learning related Two Minute Papers episodes - https://www.youtube.com/playlist?list=PLujxSBD-JXglGL3ERdDOhthD3jTlfudC2

WE WOULD LIKE TO THANK OUR GENEROUS PATREON SUPPORTERS WHO MAKE TWO MINUTE PAPERS POSSIBLE:

David Jaenisch, Sunil Kim, Julian Josephs.

https://www.patreon.com/TwoMinutePapers

We also thank Experiment for sponsoring our series. - https://experiment.com/

Subscribe if you would like to see more of these! - http://www.youtube.com/subscription_center?add_user=keeroyz

The thumbnail background image was created by C. P. Ewing - https://flic.kr/p/GDm4Jd

Splash screen/thumbnail design: Felícia Fehér - http://felicia.hu

Károly Zsolnai-Fehér's links:

Facebook → https://www.facebook.com/TwoMinutePapers/

Twitter → https://twitter.com/karoly_zsolnai

Web → https://cg.tuwien.ac.at/~zsolnai/