Separable Subsurface Scattering | Two Minute Papers #66

Video description

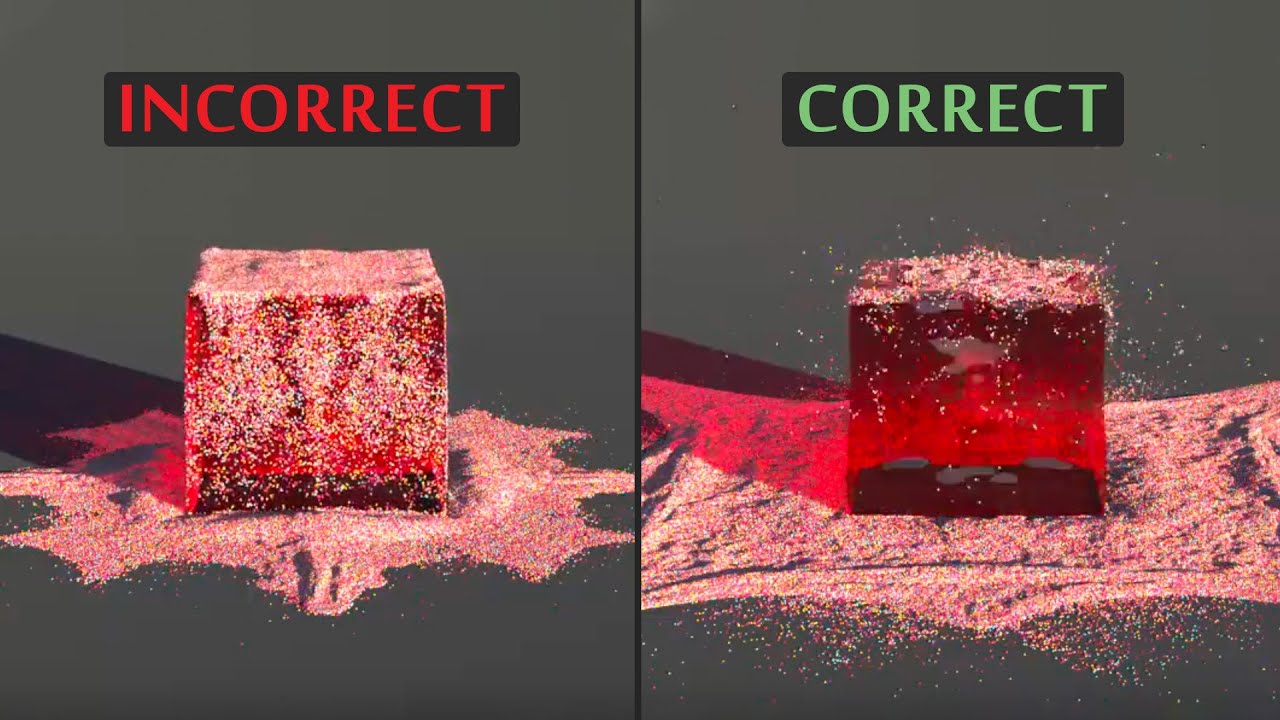

Separable Subsurface Scattering is a novel technique for adding subsurface light transport calculations to computer games and other real-time applications.

____________________________

The paper "Separable Subsurface Scattering" and its implementation are available here:

https://users.cg.tuwien.ac.at/zsolnai/gfx/separable-subsurface-scattering-with-activision-blizzard/

http://www.iryoku.com/separable-sss/

Recommended for you:

Ray Tracing / Subsurface Scattering @ Function 2015 - https://www.youtube.com/watch?v=qyDUvatu5M8

Separable Subsurface Scattering Unofficial Talk - https://www.youtube.com/watch?v=mU-5CsaPfsE

Separable Subsurface Scattering Implementation in Blender (thank you Lubos Lenco!):

http://www.blendernation.com/2016/05/02/separable-subsurface-scattering-game-engine-cycles/

http://luboslenco.com/notes/ssss/

WE WOULD LIKE TO THANK OUR GENEROUS SUPPORTERS WHO MAKE TWO MINUTE PAPERS POSSIBLE:

Sunil Kim.

https://www.patreon.com/TwoMinutePapers

Subscribe if you would like to see more of these! - http://www.youtube.com/subscription_center?add_user=keeroyz

Image credits:

Leaves - https://flic.kr/p/fGie2L

Snail - https://flic.kr/p/8wXFiC

Skin - Wikipedia

Extended credits (copied from the Acknowledgements section of the mentioned paper):

The authors want to thank the reviewers for their insightful comments; Infinity Realities, in particular Lee Perry-Smith, for his head model and for the Lauren model; the Institute of Creative Technologies at USC, in particular Paul Debevec, for the Ari and Bernardo models; and Bernardo Antoniazzi for letting us use his likeness. Furthermore, we want to thank the Stanford University Computer Graphics Laboratory for the Dragon model, and the following contributors from Blend Swap under CC-BY licence: longrender for the Dish model, metalix for the Green apple model, betomo16 for the Plant model, and PickleJones for the Grapes model. We also thank Felícia Fehér for editing the figures. This research has been partially funded by the European Commission, 7th Framework Programme, through projects GOLEM and VERVE, the Spanish Ministry of Economy and Competitiveness through project LIGHTSLICE, and project TAMA, and the Austrian Science Fund (FWF) through project no. P23700-N23.

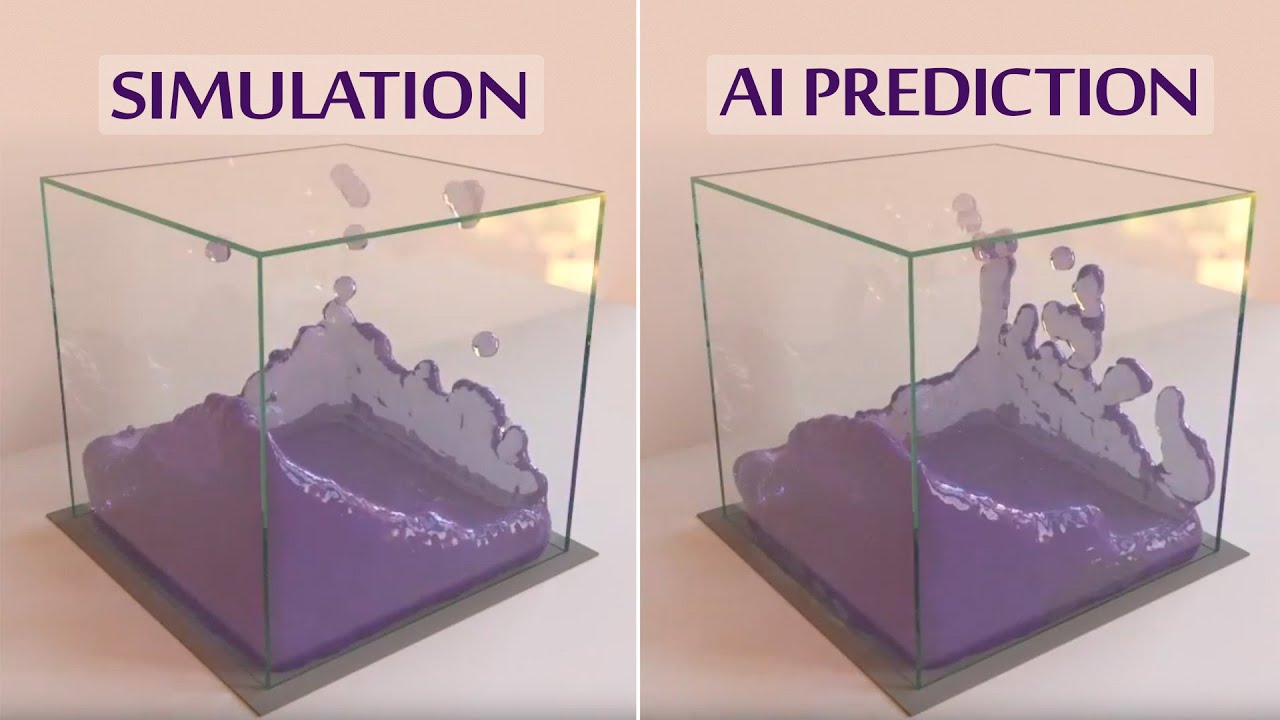

The thumbnail background image was taken from the corresponding paper linked above.

Splash screen/thumbnail design: Felícia Fehér - http://felicia.hu

Károly Zsolnai-Fehér's links:

Facebook → https://www.facebook.com/TwoMinutePapers/

Twitter → https://twitter.com/karoly_zsolnai

Web → https://cg.tuwien.ac.at/~zsolnai/