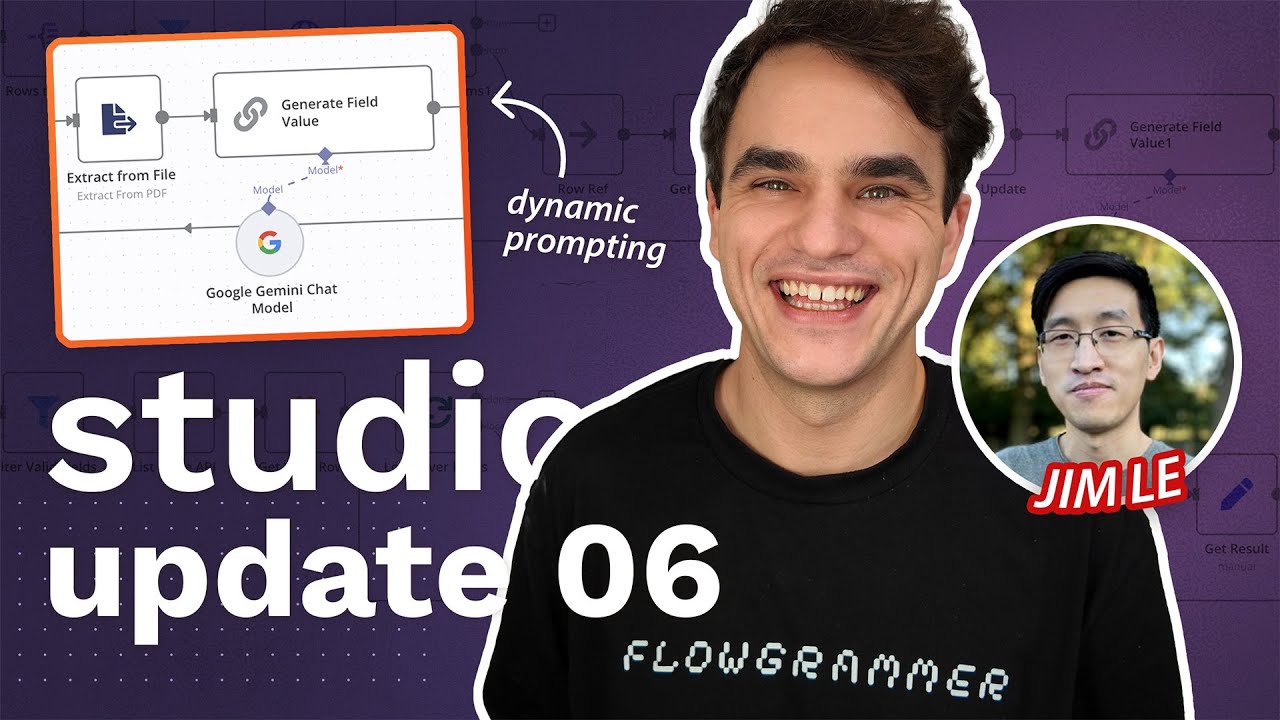

Studio Update #06: Fine Tuning to Tag Support Tickets? Plus Dynamic AI prompting via Spreadsheets


Video description

In this episode: the Prompt Engineering for AI Agents tutorial has dropped! Then Max kicks off a collab with n8n's Support team, and Jim Le shows off a handy pattern for dynamically injecting prompts.

Follow Max on LinkedIn: https://www.linkedin.com/in/maxtkacz/

Here’s what’s inside:

🤖 Building AI Agents Pt3 is out: https://youtu.be/77Z07QnLlB8

⚙️ Fine-Tuning Tiny Models – Breaking ground on a Gmail ➡️ 3B self-hostable LLM, fine-tuned on your own voice, that can write drafts for you.

✂️ n8n Support AI Project – A behind-the-scenes peek at a meeting with n8n's Support team to spec out a 'Tag Support Tickets' use case in @zammadhq. Multi-shot prompting or fine-tuning? Let's see!

♻️ Dynamic Prompts with Jim Le – Learn how description metadata in platforms like Baserow lets end-users update system prompts on the fly, no developer needed.
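For readers who want a feel for the dynamic prompt idea before watching, here is a minimal Python sketch. It is not the workflow shown in the episode: the function name `build_system_prompt` and the shape of the field metadata (a list of records with `name` and `description` keys) are assumptions made for illustration. The point is only that the prompt is assembled from descriptions an end-user can edit in the spreadsheet, so changing a description changes the prompt without touching the workflow.

```python
from typing import Iterable, Mapping, Optional


def build_system_prompt(fields: Iterable[Mapping[str, Optional[str]]]) -> str:
    """Assemble a system prompt from per-field descriptions (hypothetical helper)."""
    lines = ["Fill in the following fields. Follow each instruction exactly."]
    for field in fields:
        description = (field.get("description") or "").strip()
        if not description:
            # A field without a description carries no instruction, so skip it.
            continue
        lines.append(f"- {field['name']}: {description}")
    return "\n".join(lines)


# Hypothetical field metadata, standing in for a table schema fetched elsewhere.
example_fields = [
    {"name": "Priority", "description": "One of: low, medium, high."},
    {"name": "Internal notes", "description": ""},
    {"name": "Product area", "description": "The feature the ticket is about."},
]

prompt = build_system_prompt(example_fields)
print(prompt)
```

Because the instructions live in the field descriptions, a non-developer can tighten or reword them in the table and the next run picks up the change.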

Chapters

00:00 - Intro

00:58 - Studio Project Updates

03:24 - Kicking off Tagging Support Tickets Project

13:24 - Dynamic Prompts with Jim Le

31:04 - Wrap up

🔗 Links and Resources:

Sign up at https://n8n.io and get 50% off for 12 months with coupon code MAX50 (apply after your free trial!)

https://community.n8n.io for help, inspiration, and connecting with fellow builders

Connect with Max on LinkedIn: https://www.linkedin.com/in/maxtkacz/

#aiagents
