Getting started with Weights & Biases for robotics

Video description

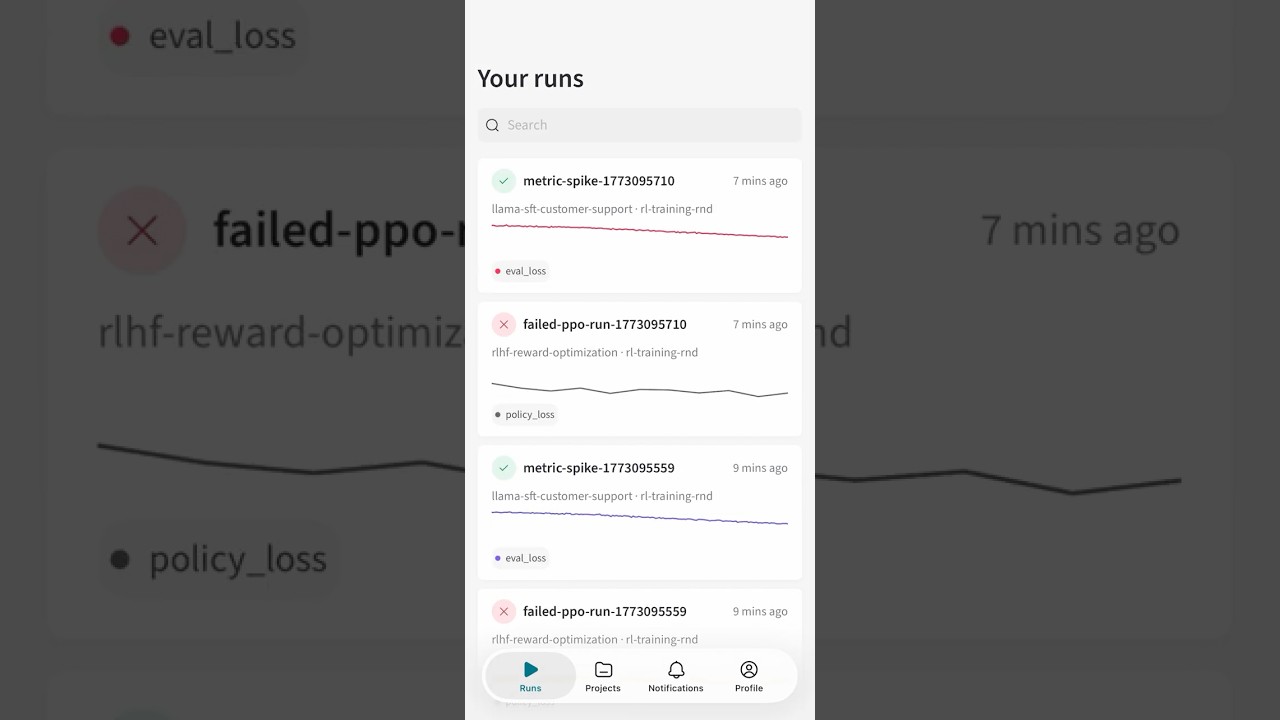

W&B Models helps AI teams track experiments, manage model artifacts, and collaborate across the entire AI model development lifecycle.

In this video, W&B AI Solutions Engineer Anu Vatsa demonstrates how teams optimize training and experiment tracking for advanced workflows, from fine-tuning Vision-Language-Action (VLA) models to reinforcement learning for embodied AI.

You’ll see how to manage long-running training runs and hyperparameter sweeps by natively tracking parameters, metrics, rollout videos, and artifacts in a single workspace.
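
As a rough illustration of that kind of tracking, here is a minimal sketch rather than the code used in the demo. The project name, metric names, config values, and the `fake_metrics` and `render_rollout` helpers are all hypothetical placeholders. The logging loop only assumes an object with a W&B-style `log(data, step=...)` method, and `main()` shows how it might be wired to `wandb.init` and `wandb.Video`.

```python
import math


def fake_metrics(step: int) -> dict:
    """Hypothetical stand-in for one training step; returns made-up metrics."""
    return {
        "train/loss": math.exp(-0.01 * step),
        "eval/success_rate": 1.0 - math.exp(-0.005 * step),
    }


def train_and_log(run, num_steps: int, video_every: int, render_rollout=None) -> int:
    """Log metrics every step and a rollout video every `video_every` steps.

    `run` is any object with a W&B-style `log(data, step=...)` method.
    Returns the number of rollout videos logged.
    """
    videos = 0
    for step in range(num_steps):
        payload = fake_metrics(step)
        if render_rollout is not None and step % video_every == 0:
            # Logging the video in the same call ties it to this training step.
            payload["rollout/video"] = render_rollout(step)
            videos += 1
        run.log(payload, step=step)
    return videos


def main() -> None:
    # Third-party imports live here so the helpers above stay importable
    # without wandb or numpy installed.
    import numpy as np
    import wandb

    run = wandb.init(
        project="robotics-demo",  # hypothetical project name
        config={"learning_rate": 3e-4, "batch_size": 64},  # placeholder values
        mode="offline",  # remove to sync to a W&B server
    )

    def render_rollout(step: int):
        # Random frames shaped (time, channels, height, width) stand in for a
        # simulator rollout. Encoding arrays may need extra packages such as moviepy.
        frames = np.random.randint(0, 256, size=(16, 3, 64, 64), dtype=np.uint8)
        return wandb.Video(frames, fps=8, format="gif")

    train_and_log(run, num_steps=100, video_every=25, render_rollout=render_rollout)
    run.finish()
```

Calling `main()` would start an offline run. Because each video is logged with an explicit `step`, it should line up with the metrics recorded at that same step in the workspace.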

The demo also shows how rollout videos sync with training steps, so you can watch robot behavior alongside the corresponding training metrics and evaluate model checkpoints more easily.

Plus, learn how centralized artifact registries, automated reports, and real-time Slack alerts help teams stay aligned and move faster.
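
To make the artifact and alert ideas concrete, here is a small sketch under stated assumptions, not the workflow shown in the video. The threshold rule in `success_rate_dropped`, the artifact name, the checkpoint file, and the sample history are all hypothetical. Whether an alert reaches Slack depends on the alert settings of the W&B account.

```python
from typing import Sequence


def success_rate_dropped(history: Sequence[float], threshold: float, patience: int) -> bool:
    """Return True when the last `patience` values are all below `threshold`.

    This rule is an illustrative assumption, not a W&B feature.
    """
    if len(history) < patience:
        return False
    return all(value < threshold for value in history[-patience:])


def main() -> None:
    # Imported here so the helper above can be used without wandb installed.
    import pathlib

    import wandb

    run = wandb.init(project="robotics-demo", mode="offline")  # hypothetical project

    # Version a checkpoint file as a model artifact.
    checkpoint = pathlib.Path("policy_step_100.pt")  # placeholder file
    checkpoint.write_bytes(b"placeholder weights")
    artifact = wandb.Artifact(name="policy-checkpoint", type="model")
    artifact.add_file(str(checkpoint))
    run.log_artifact(artifact)

    # Raise an alert if the (made-up) success rate stays low.
    history = [0.62, 0.41, 0.38, 0.35]
    if success_rate_dropped(history, threshold=0.5, patience=3):
        run.alert(
            title="Success rate dropped",
            text="Success rate stayed below 0.5 for the last 3 evaluations.",
            level=wandb.AlertLevel.WARN,
        )

    run.finish()
```

Keeping the alert condition in a plain function makes it easy to unit-test separately from any W&B calls.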

By the end, you’ll understand how to achieve full reproducibility and observability for complex Physical AI and robotics projects, whether you’re training models on your own or collaborating with robotics teams.

*Chapters*

0:00 – Introduction & fine-tuning VLA models

0:47 – Hyperparameter tuning & experiment tracking

1:27 – Rollout simulations with Isaac Sim

2:09 – Artifact tracking & model registry

3:18 – Reinforcement learning team workspace

5:23 – Alerts, monitoring & closing CTA

*View the demo projects:*

- NVIDIA GR00T VLA fine-tuning: https://wandb.ai/wandb-smle/isaacsim-nvidia-vla-crwv/sweeps/v2aohfof?nw=sxt5zec5kh

- Reinforcement learning with Isaac Lab: https://wandb.ai/wandb-smle/isaaclab-wandb-crwv?nw=o1pb2dm0rfd

*Try it yourself with our Blueprints*

- VLA fine-tuning: https://github.com/anu-wandb/w-b-nvidia-isaac-lab-vla

- Reinforcement learning with Isaac Lab: https://github.com/anu-wandb/wb-nvidia-isaac-lab

*https://wandb.ai/site/models/*