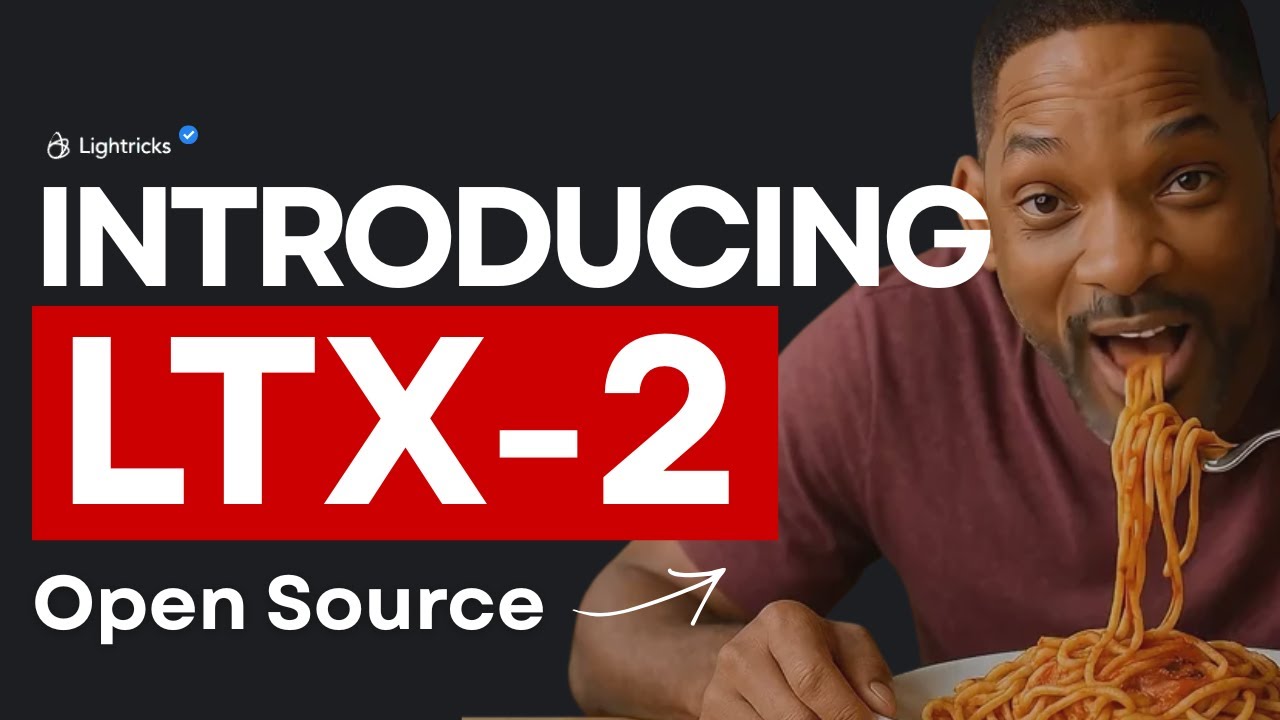

LTX-2 Is the NEW #1 Open-Source AI Video Model (Audio + Video, Runs Locally)

Video description

LTX-2 is a new open-source AI model that generates video and audio together in a single pass. In this video, we break down how it works, why native audio-video sync matters, and demo anime-style scenes you can run locally.

For hands-on demos, tools, workflows, and dev-focused content, check out World of AI, our channel dedicated to building with these models: @intheworldofai

🔗 My Links:

📩 Sponsor a Video or Feature Your Product: intheuniverseofaiz@gmail.com

🔥 Become a Patron (Private Discord): /worldofai

🧠 Follow me on Twitter: https://x.com/UniverseofAIz

🌐 Website: https://www.worldzofai.com

🚨 Subscribe To The FREE AI Newsletter For Regular AI Updates: https://intheworldofai.com/

ltx 2,ltx-2,lightricks ltx2,open source ai video,ai video generator,audio video ai,text to video ai,ai video with sound,ai animation model,anime ai video,ai lip sync,ai dialogue video,ai video demo,local ai video,run ai video locally,low vram ai video,fast ai video model,ai diffusion transformer,dit video model,multimodal ai model,ai video architecture,ai research paper,ai model breakdown,universe of ai

0:00 - Intro

2:21 - How it works!

5:37 - DEMO!

8:18 - Thoughts

8:46 - Outro