Yannic Kilcher

Verified source

Technology

Yannic Kilcher channel: 387 videos in the knowledge base, category: tech.

In the knowledge base since March 2026

387 videos in the knowledge base

13 guides

319K subscribers

13.6M views

Channel guides

Automate code translation between programming languages with Unsupervised TransCoder

Master the power of OpenAssistant: how to use and deploy state-of-the-art open-source language models

Master the DINO architecture: build computer vision models without labels

Mastering diffusion models: how to generate high-quality images that surpass GANs

Scaling mathematical reasoning in LLMs: how to train models with GRPO and data synthesis

Mastering the Mamba architecture: how to design efficient models for long sequences

Videos in the knowledge base

387 videos

I'm out of Academia

DINO: Emerging Properties in Self-Supervised Vision Transformers (Facebook AI Research Explained)

Why AI is Harder Than We Think (Machine Learning Research Paper Explained)

I COOKED A RECIPE MADE BY A.I. | Cooking with GPT-3 (Don't try this at home)

NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis (ML Research Paper Explained)

I BUILT A NEURAL NETWORK IN MINECRAFT | Analog Redstone Network w/ Backprop & Optimizer (NO MODS)

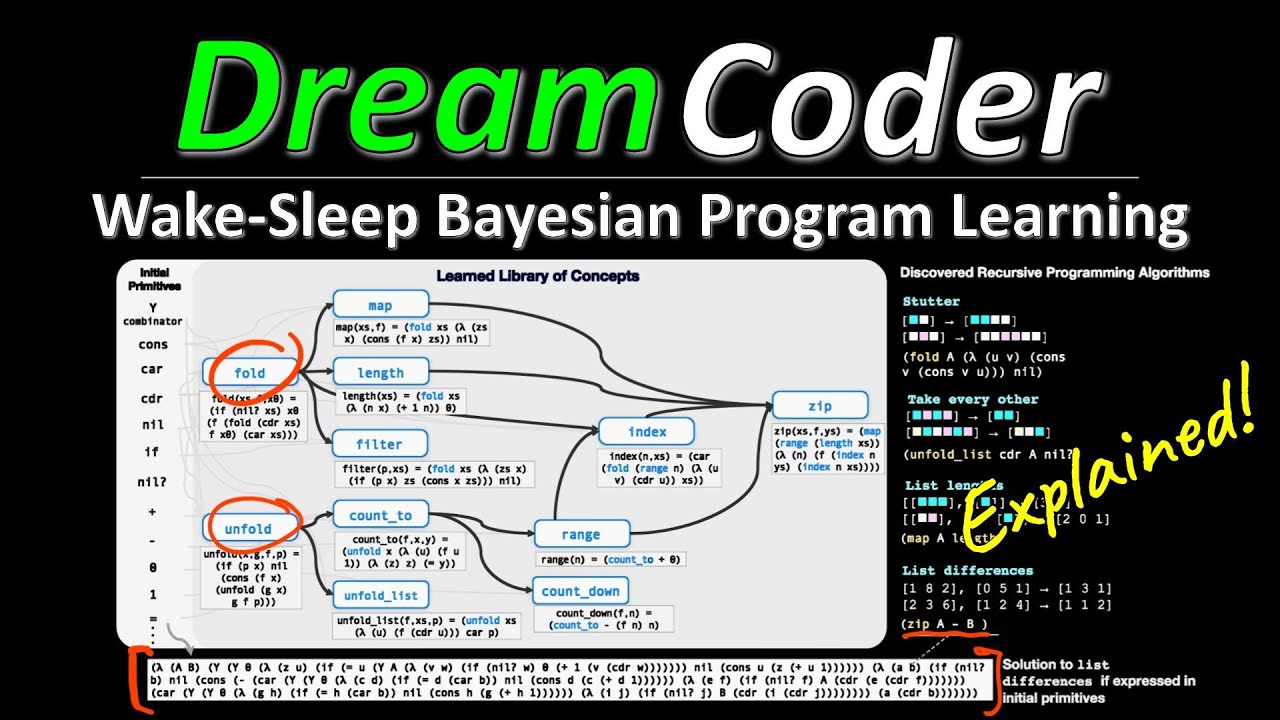

DreamCoder: Growing generalizable, interpretable knowledge with wake-sleep Bayesian program learning

Machine Learning PhD Survival Guide 2021 | Advice on Topic Selection, Papers, Conferences & more!

Is Google Translate Sexist? Gender Stereotypes in Statistical Machine Translation

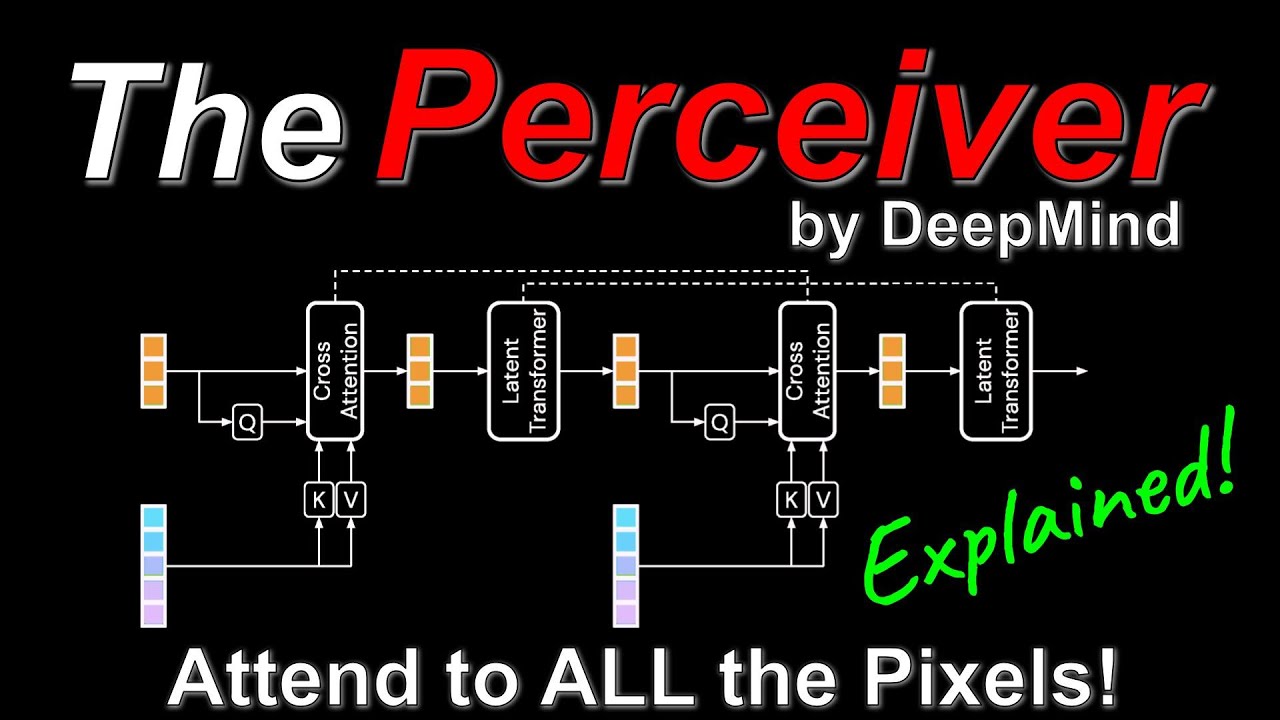

Perceiver: General Perception with Iterative Attention (Google DeepMind Research Paper Explained)

Pretrained Transformers as Universal Computation Engines (Machine Learning Research Paper Explained)

Yann LeCun - Self-Supervised Learning: The Dark Matter of Intelligence (FAIR Blog Post Explained)

Multimodal Neurons in Artificial Neural Networks (w/ OpenAI Microscope, Research Paper Explained)

GLOM: How to represent part-whole hierarchies in a neural network (Geoff Hinton's Paper Explained)

Linear Transformers Are Secretly Fast Weight Memory Systems (Machine Learning Paper Explained)

DeBERTa: Decoding-enhanced BERT with Disentangled Attention (Machine Learning Paper Explained)

Dreamer v2: Mastering Atari with Discrete World Models (Machine Learning Research Paper Explained)

TransGAN: Two Transformers Can Make One Strong GAN (Machine Learning Research Paper Explained)

NFNets: High-Performance Large-Scale Image Recognition Without Normalization (ML Paper Explained)

Nyströmformer: A Nyström-Based Algorithm for Approximating Self-Attention (AI Paper Explained)

Deep Networks Are Kernel Machines (Paper Explained)

Feedback Transformers: Addressing Some Limitations of Transformers with Feedback Memory (Explained)

SingularityNET - A Decentralized, Open Market and Network for AIs (Whitepaper Explained)