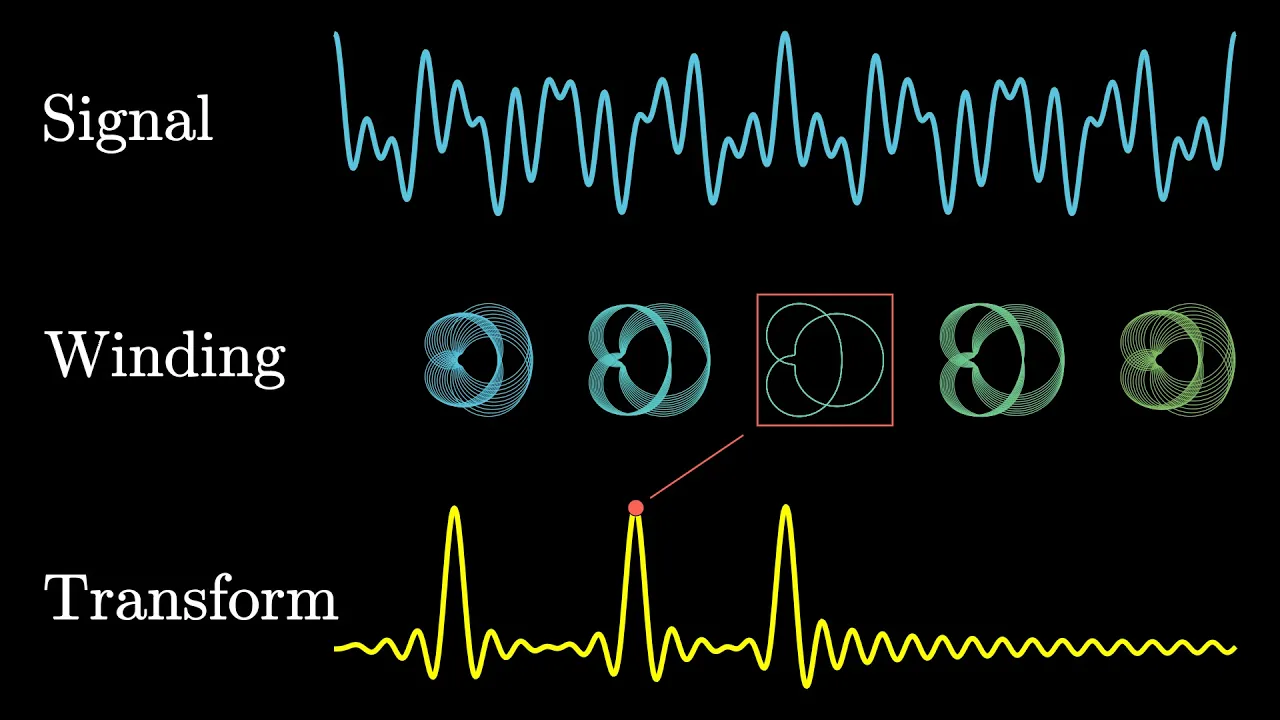

Let me pull out an old differential equations textbook that I learned from in college, and let's turn to this funny little exercise in here that asks the reader to compute e^(At), where A, we're told, is going to be a matrix, and the insinuation seems to be that the result will also be a matrix. It then offers several examples for what you might plug in for A. Now, taken out of context, putting a matrix into an exponent like this probably seems like total nonsense, but what it refers to is an extremely beautiful operation, and the reason it shows up in this book is that it's useful! It's used to solve a very important class of differential equations. In turn, given that the universe is often written in the language of differential equations, you see this pop up in physics all the time too, especially in quantum mechanics, where matrix exponents are littered all over the place and play a particularly prominent role. This has a lot to do with Schrodinger's equation, which we'll touch on a bit later, and it may also help in understanding your romantic relationships, but again, all in due time. A big part of the reason I want to cover this topic is that there is an extremely nice way to visualize what matrix exponents are actually doing using flow that not a lot of people seem to talk about, but for the bulk of this chapter, let's start by laying out what exactly the operation is, and see if we can get a feel for what kinds of problems it helps us to solve.
The first thing you should know is that this is not some bizarre way to multiply the constant e by itself multiple times. You would be right to call that nonsense. The actual definition is related to a certain infinite polynomial for describing real number powers of e, what we call its Taylor series. For example, if I took the number 2 and plugged it into this polynomial, then as you add more and more terms, each of which looks like some power of 2 divided by some factorial, the sum approaches a number near 7.389, and this number is precisely e times e. If you increment this input by one, then somewhat miraculously, no matter where you started from, the effect on the output is always to multiply it by another factor of e. For reasons that you're going to see in a bit, mathematicians became interested in plugging all kinds of things into this polynomial, things like complex numbers, and for our purposes today, matrices, even when those objects do not immediately make sense as exponents. What some authors do is give this infinite polynomial the name "exp" when you plug in more exotic inputs. It's a gentle nod to the connection that this has to exponential functions in the case of real numbers, even though obviously these inputs don't make sense as exponents. However, an equally common convention is to give a much less gentle nod to the connection and just abbreviate the whole thing as e to the power of whatever object you're plugging in, whether that's a complex number or a matrix or all sorts of more exotic objects. So while this equation is a theorem for real numbers, it's a definition for more exotic inputs. Cynically, you could call this a blatant abuse of notation. More charitably, you might view it as an example of the beautiful cycle between discovery and invention in math. In either case, plugging in a matrix even to a polynomial might seem a little strange, so let's be clear on what we mean here. The matrix has to have the same number of rows and columns. That way you can multiply it by itself according to the usual rules of matrix multiplication. This is what we mean by squaring it. Similarly, if you were to take that result and then multiply it by the original matrix again, this is what we mean by cubing the matrix. If you carry on like this, you can take any whole number power of a matrix; it's perfectly sensible. In this context, powers still mean exactly what you would expect, repeated multiplication.
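
For anyone who wants to poke at the real-number case numerically, here is a minimal sketch in Python. The function name `exp_partial_sum` and the chosen term counts are just illustrative assumptions, not anything from the textbook. It adds up the first few terms of the polynomial at the input 2, then checks that bumping the input from 2 to 3 multiplies the output by e.

```python
import math

def exp_partial_sum(x, terms):
    """Add up the first `terms` terms of 1 + x + x^2/2! + x^3/3! + ..."""
    total = 0.0
    term = 1.0  # x^0 / 0!
    for n in range(terms):
        total += term
        term *= x / (n + 1)  # turn x^n / n! into x^(n+1) / (n+1)!
    return total

# Watch the partial sums at x = 2 settle down as terms are added.
for terms in (1, 2, 4, 8, 16, 30):
    print(terms, exp_partial_sum(2.0, terms))

print("e * e          =", math.e * math.e)
print("ratio f(3)/f(2) =", exp_partial_sum(3.0, 40) / exp_partial_sum(2.0, 40))
```

The last line is the "somewhat miraculous" property: the ratio should agree with e up to rounding error.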
Each term in this polynomial is scaled by one divided by some factorial, and with matrices, all that means is that you multiply each component by that number. Likewise, it always makes sense to add together two matrices, this is something you again do term by term. The astute among you might ask how sensible it is to take this out to infinity, which would be a great question, one that I'm largely going to postpone the answer to, but I can show you one pretty fun example here now. Take this 2x2 matrix that has negative pi and pi sitting off its diagonal entries. Let's see what the sum gives. The first term is the identity matrix, this is actually what we mean by definition when we raise a matrix to the zeroth power. Then we add the matrix itself, which gives us the pi off the diagonal terms, and then add half of the matrix squared, and continuing on I'll have the computer keep adding more and more terms, each of which requires taking one more matrix product to get the new power, and then adding it to a running tally. And as it keeps going, it seems to be approaching a stable value, which is around negative one times the identity matrix. In this sense, we say the infinite sum equals that negative identity. By the end of this video, my hope is that this particular fact comes to make total sense to you. For any of you familiar with Euler's famous identity, this is essentially the matrix version of that. It turns out that in general, no matter what matrix you start with, as you add more and more terms, you eventually approach some stable value, though sometimes it can take quite a while before you get there. Just seeing the definition like this in isolation raises all kinds of questions, most notably, why would mathematicians and physicists be interested in torturing their poor matrices this way? What problems are they trying to solve? 
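
If you'd like to reproduce that running tally yourself, here is a rough sketch in plain Python. The helper names `mat_mul` and `exp_series` are made up for this illustration, and matrices are stored as lists of rows. It builds each new term from the previous one with one more matrix product, exactly as described, and prints how the partial sums drift toward the negative identity.

```python
import math

def mat_mul(a, b):
    """Multiply two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def exp_series(m, terms):
    """Partial sum I + M + M^2/2! + ... using `terms` terms in total."""
    n = len(m)
    identity = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    total = [row[:] for row in identity]
    term = [row[:] for row in identity]  # holds M^k / k!, starting at k = 0
    for k in range(1, terms):
        term = mat_mul(term, m)                        # one more matrix product
        term = [[entry / k for entry in row] for row in term]  # divide by k
        total = [[total[i][j] + term[i][j] for j in range(n)]
                 for i in range(n)]
    return total

# The matrix with negative pi and pi off the diagonal.
M = [[0.0, -math.pi], [math.pi, 0.0]]
for terms in (2, 5, 10, 20, 40):
    print(terms, exp_series(M, terms))
```

Early partial sums wander quite far from the final answer before settling, which is a small taste of the "it can take quite a while" caveat.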
And if you're anything like me, a new operation is only satisfying when you have a clear view of what it's trying to do, some sense of how to predict the output based on the input before you actually crunch the numbers. How on earth could you have predicted that the matrix with pi off the diagonals results in a negative identity matrix like this? Often in math you should view the definition not as a starting point, but as a target. Contrary to the structure of textbooks, mathematicians do not start by making definitions and then listing a lot of theorems and proving them and then showing some examples. The process of discovering math typically goes the other way around. They start by chewing on specific problems, and then generalizing those problems, then coming up with constructs that might be helpful in those general cases, and only then do you write down a new definition, or extend an old one. As to what sorts of specific examples might motivate matrix exponents, two come to mind. One involving relationships, and the other quantum mechanics. Let's start with relationships.

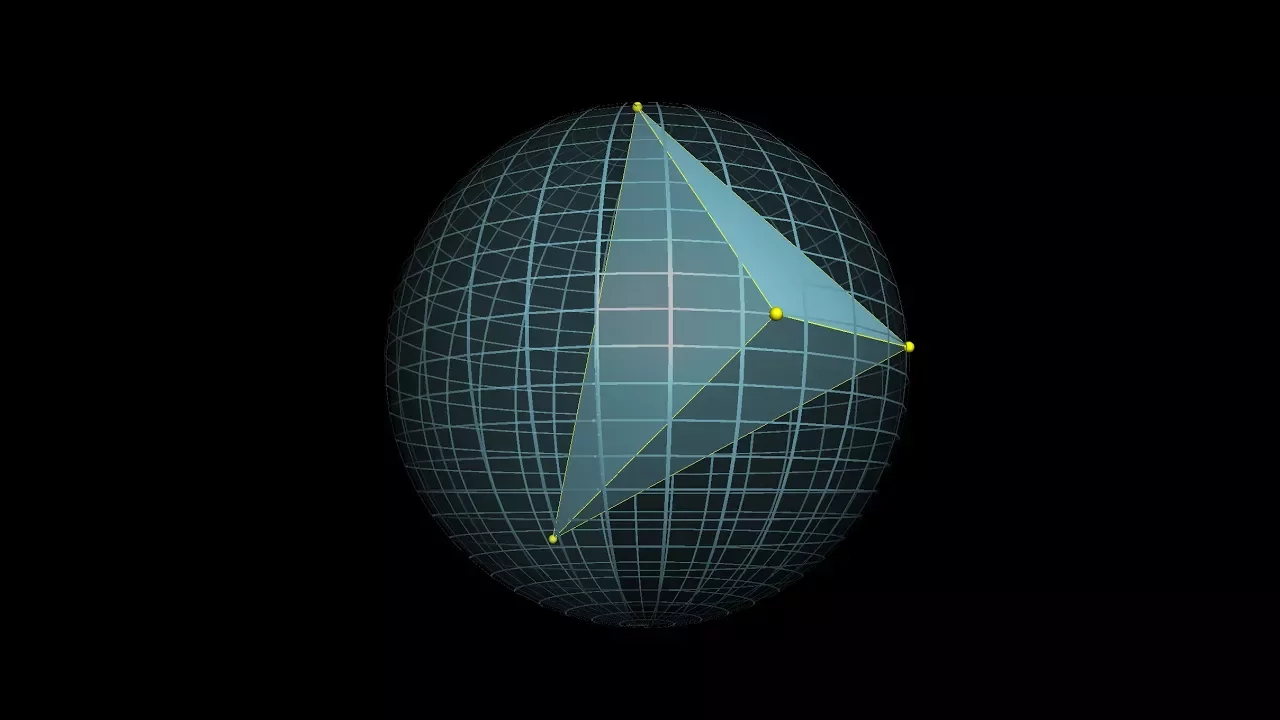

Suppose we have two lovers, let's call them Romeo and Juliet, and let's let x represent Juliet's love for Romeo, and y represent his love for her, both of which are going to be values that change with time. This is an example we actually touched on in chapter 1, based on a Steven Strogatz article, but it's okay if you didn't see that. The way their relationship works is that the rate at which Juliet's love for Romeo changes, the derivative of this value, is equal to negative one times Romeo's love for her. So in other words, when Romeo is expressing cool disinterest, that's when Juliet's feelings actually increase, whereas if he becomes too infatuated, her interest will start to fade. Romeo, on the other hand, is the opposite. The rate of change of his love is equal to the value of Juliet's love, so while Juliet is mad at him, his affections tend to decrease, whereas if she loves him, that's when his feelings grow. Of course, neither one of these numbers is holding still. As Romeo's love increases in response to Juliet, her equation continues to apply and drives her love down. Both of these equations always apply, from each infinitesimal point in time to the next, so every slight change to one value immediately influences the rate of change of the other. This is a system of differential equations. It's a puzzle, where your challenge is to find explicit functions for x of t and y of t that make both of these expressions true. Now, as systems of differential equations go, this one is on the simpler side, enough so that many calculus students could probably just guess at an answer. But keep in mind, it's not enough to find some pair of functions that makes this true. If you want to actually predict where Romeo and Juliet end up after some starting point, you have to make sure that your functions match the initial set of conditions at time t equals 0.
More to the point, our actual goal today is to systematically solve more general versions of this equation, without guessing and checking, and it's that question that leads us to matrix exponents. Very often when you have multiple changing values like this, it's helpful to package them together as coordinates of a single point in a higher dimensional space. So for Romeo and Juliet, think of their relationship as a point in a 2D space, the x-coordinate capturing Juliet's feelings, and the y-coordinate capturing Romeo's. Sometimes it's helpful to picture this as an arrow from the origin, other times just as a point. All that really matters is that it encodes two numbers, and moving forward we'll be writing that as a column vector. And of course, this is all a function of time. You might picture the rate of change of this state, the thing that packages together the derivative of x and the derivative of y, as a kind of velocity vector in this state space, something that tugs at our point in some direction and with some magnitude that indicates how quickly it's changing. Remember, the rule here is that the rate of change of x is negative y, and the rate of change of y is x. Set up as vectors like this, we could rewrite the right hand side of this equation as a product of this matrix with the original vector xy. The top row encodes Juliet's rule, and the bottom row encodes Romeo's rule. So what we have here is a differential equation telling us that the rate of change of some vector is equal to a certain matrix times itself. In a moment we'll talk about how matrix exponentiation solves this kind of equation, but before that let me show you a simpler way that we can solve this particular system, one that uses pure geometry, and it helps set the stage for visualizing matrix exponents a bit later. This matrix from our system is a 90 degree rotation matrix. 
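
Written out in symbols, the setup described so far looks like this, with x as Juliet's love and y as Romeo's, as above. The first line is the pair of rules, and the second is the same thing packaged as a matrix acting on the state vector.

```latex
\frac{dx}{dt} = -y(t), \qquad \frac{dy}{dt} = x(t)

\frac{d}{dt}
\begin{bmatrix} x(t) \\ y(t) \end{bmatrix}
=
\begin{bmatrix} 0 & -1 \\ 1 & 0 \end{bmatrix}
\begin{bmatrix} x(t) \\ y(t) \end{bmatrix}
```

The top row (0, -1) reproduces Juliet's rule, and the bottom row (1, 0) reproduces Romeo's.
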
For any of you rusty on how to think about matrices as transformations, there's a video all about it on this channel, a series really. The basic idea is that when you multiply a matrix by the vector 1 0, it pulls out the first column, and similarly if you multiply it by 0 1, that pulls out the second column. What this means is that when you look at a matrix, you can read its columns as telling you what it does to these two vectors, known as the basis vectors. The way it acts on any other vector is a result of scaling and adding these two basis results by that vector's coordinates. So looking back at the matrix from our system, notice how from its columns we can tell it takes the first basis vector to 0 1, and the second to negative 1 0, hence why I'm calling it the 90 degree rotation matrix. What it means for our equation is that it's saying wherever Romeo and Juliet are in this space, their rate of change has to look like a 90 degree rotation of this position vector. The only way velocity can permanently be perpendicular to position like this is when you rotate around the origin in circular motion, never growing or shrinking because the rate of change has no component in the direction of the position. More specifically, since the length of this velocity vector equals the length of the position vector, then for each unit of time, the distance that this covers is equal to one radius's worth of arc length along that circle. In other words, it rotates at one radian per unit time, so in particular it would take 2 pi units of time to make a full revolution. If you want to describe this kind of rotation with a formula, we can use a more general rotation matrix, which looks like this. Again, we can read it in terms of the columns. Notice how the first column tells us that it takes that first basis vector to cos t sin t, and the second column tells us that it takes the second basis vector to negative sin t cos t, both of which are consistent with rotating by t radians.
So, to solve the system, if you want to predict where Romeo and Juliet end up after t units of time, you can multiply this matrix by their initial state. The active viewers among you might also enjoy taking a moment to pause and confirm that the explicit formulas you get out of this for x of t and y of t really do satisfy the system of differential equations that we started with.
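
If you'd rather let a computer do that confirmation, here is a small sketch. The names `state` and `velocity`, the step size `h`, and the starting values are all assumptions made for the sake of the example. It applies the rotation matrix to an initial state and then estimates the derivatives with a finite difference, so you can compare them against negative y and x.

```python
import math

def state(t, x0, y0):
    """Rotate the initial state (x0, y0) by t radians."""
    x = math.cos(t) * x0 - math.sin(t) * y0
    y = math.sin(t) * x0 + math.cos(t) * y0
    return x, y

def velocity(t, x0, y0, h=1e-6):
    """Central-difference estimate of (dx/dt, dy/dt) at time t."""
    x_plus, y_plus = state(t + h, x0, y0)
    x_minus, y_minus = state(t - h, x0, y0)
    return (x_plus - x_minus) / (2 * h), (y_plus - y_minus) / (2 * h)

x0, y0 = 2.0, 1.0  # arbitrary illustrative starting feelings
for t in (0.0, 0.5, 1.0, 2.0):
    x, y = state(t, x0, y0)
    dx, dy = velocity(t, x0, y0)
    print(f"t={t}: dx/dt={dx:.6f} vs -y={-y:.6f}, dy/dt={dy:.6f} vs x={x:.6f}")
```

This is only a numerical spot check at a few times, not a proof; differentiating the formulas by hand is still the real confirmation.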

The mathematician in you might wonder if it's possible to solve not just this specific system, but equations like it for any other matrix, no matter its coefficients. To ask this question is to set yourself up to rediscover matrix exponents. The main goal for today is for you to understand how this equation lets you intuitively picture the operation which we write as e raised to a matrix, and on the flip side, how being able to compute matrix exponents lets you explicitly solve this equation. A much less whimsical example is Schrodinger's famous equation, which is the fundamental equation describing how systems in quantum mechanics change over time. It looks pretty intimidating, and I mean it's quantum mechanics so of course it will, but it's actually not that different from the Romeo-Juliet setup. This symbol here refers to a certain vector. It's a vector that packages together all the information you might care about in a system, like the various particles' positions and momenta. It's analogous to our simpler 2D vector that encoded all the information about Romeo and Juliet. The equation says that the rate at which this state vector changes looks like a certain matrix times itself. There are a number of things that make Schrodinger's equation notably more complicated, but in the back of your mind you might think of it as a target point that you and I can build up to, with simpler examples like Romeo and Juliet offering more friendly stepping stones along the way. Actually the simplest example, which is tied to ordinary real number powers of e, is the one-dimensional case. This is when you have a single changing value, and its rate of change equals some constant times itself. So the bigger the value, the faster it grows. Most people are more comfortable visualizing this with a graph, where the higher the value of the graph, the steeper its slope, resulting in this ever-steepening upward curve. 
Just keep in mind that when we get to higher-dimensional variants, graphs are a lot less helpful. This is a highly important equation in its own right. It's a very powerful concept when the rate of change of a value is proportional to the value itself. This is the equation governing things like compound interest, or the early stages of population growth before the effects of limited resources kick in, or the early stages of an epidemic while most of the population is susceptible. Calculus students all learn about how the derivative of e^(rt) is r * e^(rt). In other words, this self-reinforcing growth phenomenon is the same thing as exponential growth, and e^(rt) solves this equation. Actually, a better way to think about it is that there are many different solutions to this equation, one for each initial condition, something like an initial investment size or an initial population, which we'll just call x0. Notice, by the way, how the higher the value for x0, the higher the initial slope of the resulting solution, which should make complete sense given the equation. The function e^(rt) is just a solution when the initial condition is 1, but if you multiply by any other initial condition, you get a new function which still satisfies this property. It still has a derivative which is r times itself, but this time it starts at x0 since e to the 0 is 1. This is worth highlighting before we generalize to more dimensions. Do not think of the exponential part as being a solution in and of itself. Think of it as something that acts on an initial condition in order to give a solution. You see, up in the two-dimensional case, when we have a changing vector whose rate of change is constrained to be some matrix times itself, what the solution looks like is also an exponential term acting on a given initial condition, but the exponential part in that case will produce a matrix that changes with time, and the initial condition is a vector.
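
To see the one-dimensional case in action, here is a hedged sketch. The function names, the rate `r`, and the step count are all assumptions picked for illustration. It follows the rule "rate of change equals r times the value" with many tiny time steps and compares the outcome to the exponential acting on the initial condition, x0 times e^(rt).

```python
import math

def euler_growth(x0, r, t_end, steps):
    """Follow dx/dt = r * x using many small time steps."""
    dt = t_end / steps
    x = x0
    for _ in range(steps):
        x += r * x * dt  # the bigger the value, the faster it grows
    return x

def exact_growth(x0, r, t):
    """The exponential e^(rt) acting on the initial condition x0."""
    return x0 * math.exp(r * t)

r, t_end = 0.5, 2.0  # illustrative growth rate and time span
for x0 in (1.0, 3.0, 10.0):
    approx = euler_growth(x0, r, t_end, steps=100_000)
    print(f"x0={x0}: stepped={approx:.5f}, x0*e^(rt)={exact_growth(x0, r, t_end):.5f}")
```

Stepping like this is only an approximation, so the two columns agree to a few decimal places rather than exactly.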
In fact, you should think of the definition of matrix exponentiation as being heavily motivated by making sure that this fact is true. For example, if we look back at the system that popped up with Romeo and Juliet, the claim now is that solutions look like e raised to this 0, negative 1, 1, 0 matrix times time, all multiplied by some initial condition. But we've already seen the solution in this case, we know it looks like a rotation matrix times the initial condition. So let's take a moment to roll up our sleeves and compute the exponential term using the definition that I mentioned at the start, and see if it lines up. Remember, writing e to the power of a matrix is a shorthand, a shorthand for plugging it in to this long infinite polynomial, the Taylor series for e to the x. I know it might seem pretty complicated to do this, but trust me, it's very satisfying how this particular one turns out. If you actually sit down and you compute successive powers of this matrix, what you'd notice is that they fall into a cycling pattern every four iterations. This should make sense given that we know it's a 90 degree rotation matrix. So when you add together all infinitely many matrices term by term, each entry in the result looks like a polynomial in t with some nice cycling pattern in its coefficients, all of them scaled by the relevant factorial term. Those of you who are savvy with Taylor series might be able to recognize that each one of these components is the Taylor series for either sine or cosine, though in that top right corner's case it's actually negative sine. So what we get from the computation is exactly the rotation matrix we had from before. To me, this is extremely beautiful. We have two completely different ways of reasoning about the same system, and they give us the same answer.
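
For reference, here is that computation written out, using M as a label (my shorthand, not notation from the textbook) for the 90 degree rotation matrix.

```latex
M = \begin{bmatrix} 0 & -1 \\ 1 & 0 \end{bmatrix}, \quad
M^2 = -I, \quad M^3 = -M, \quad M^4 = I

e^{Mt} = \sum_{n=0}^{\infty} \frac{(Mt)^n}{n!}
= I\left(1 - \frac{t^2}{2!} + \frac{t^4}{4!} - \cdots\right)
+ M\left(t - \frac{t^3}{3!} + \frac{t^5}{5!} - \cdots\right)

e^{Mt} = \cos(t)\, I + \sin(t)\, M
= \begin{bmatrix} \cos t & -\sin t \\ \sin t & \cos t \end{bmatrix}
```

The even powers collect into the cosine series and the odd powers into the sine series, which is where the negative sine in the top right corner comes from.
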
It's reassuring that they do, but it's wild just how different the mode of thought is when you're chugging through this polynomial versus when you're geometrically reasoning about what a velocity perpendicular to a position must imply. Hopefully the fact that these line up inspires a little confidence in the claim that matrix exponents really do solve systems like this. This explains the computation we saw at the start, by the way, with the matrix that had negative pi and pi off the diagonals, producing the negative identity. This expression is exponentiating a 90 degree rotation matrix times pi, which is another way to describe what the Romeo-Juliet setup does after pi units of time. As we now know, that has the effect of rotating everything 180 degrees in this state space, which is the same as multiplying by negative 1.
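
As a quick sanity check that the two lines of reasoning really do agree, here is a sketch that sums the series for this one specific matrix and compares it to the rotation matrix at a few times, including t equals pi. The function names and the choice of 40 terms are assumptions for illustration, and the shortcut inside the loop only works for this particular 2x2 matrix.

```python
import math

def exp_rotation_generator(t, terms=40):
    """Sum the series for e^(M t), where M = [[0, -1], [1, 0]]."""
    total = [[1.0, 0.0], [0.0, 1.0]]
    term = [[1.0, 0.0], [0.0, 1.0]]  # holds (M t)^k / k!, starting at k = 0
    for k in range(1, terms):
        # Right-multiplying [[a, b], [c, d]] by M gives [[b, -a], [d, -c]];
        # then scale by t / k to get the next term of the series.
        (a, b), (c, d) = term
        term = [[b * t / k, -a * t / k], [d * t / k, -c * t / k]]
        total = [[total[i][j] + term[i][j] for j in range(2)] for i in range(2)]
    return total

def rotation(t):
    """The rotation-by-t-radians matrix from the geometric argument."""
    return [[math.cos(t), -math.sin(t)], [math.sin(t), math.cos(t)]]

for t in (0.5, math.pi / 2, math.pi):
    print("t =", t)
    print("  series:  ", exp_rotation_generator(t))
    print("  rotation:", rotation(t))
```

At t equals pi both rows of output should sit very close to the negative identity, matching the computation from the start.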